Why Lab-Grown Brain Cells Might Never Become Conscious

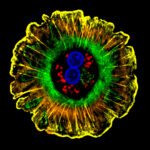

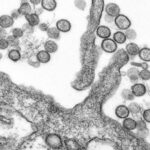

It’s been more than a decade since scientists first started publishing papers on neural organoids, the small clusters of cells grown in labs and designed to mimic various parts of the human brain. Since then, organoids have been used to study everything from bipolar disorder and Alzheimer’s disease to tumors and parasitic infections. Because these new tools have the potential to reduce the use of animals in research (a goal of the current Trump administration), the field’s future may be more financially secure than that of other areas of scientific research. In September, for example, the federal government announced an $87 million investment in organoid research broadly.

Matthew Owen brings a unique perspective to this emerging field. As a philosopher of mind, he focuses on trying to understand both what the mind is and how it relates to the body and the brain. He draws on the work of historical philosophers and applies some of their ideas to modern-day science. In 2020, as a visiting scholar in a neuroscience lab at McGill University, he was introduced to researchers working with organoids. Owen, who also does research in bioethics, wanted to help them address a perhaps unsettling question: Could these miniature cell clusters ever develop consciousness?

Philosopher Matthew Owen focuses on trying to understand both what the mind is and how it relates to the body and the brain.

Visual: Courtesy of Matthew Owen

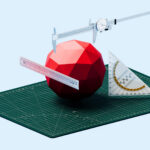

Some experts believe that organoid consciousness is not likely to happen anytime in the near future, if at all. Still, certain experiments are prompting the question. In 2022, for example, researchers, including Brett Kagan of the Australian start-up Cortical Labs, published a paper explaining how they had taught their lab-grown brain cells to play a ping-pong-like video game. (Because the cells were placed in a single layer, the structures were not technically organoids, though they are expected to have similar capabilities.) In the process, the authors wrote, the tiny cell clusters displayed “sentience.” Undark recently spoke with Owen about this particular experiment and about his own writing on organoids.

Owen is a faculty member in the philosophy department at Yakima Valley College and an affiliate faculty member at the University of Michigan’s Center for Consciousness Science. The interview was conducted over Zoom and has been edited for length and clarity.

Undark: What are some studies that have prompted people to wonder whether organoids might one day develop consciousness?

Matthew Owen: Brett Kagan and colleagues claimed in their 2022 study to have demonstrated that a single layer of cortical neurons can self-organize activity to display intelligent and sentient behavior when embodied in a simulated game world. While the report regarding that study is alarming, I think the researchers’ use of the term “sentient” should be interpreted as “adaptively responsive to stimuli,” not as “sensing” or “feeling.” I think [these neurons] learned in the same sense that an algorithm learns that I like to watch ski videos and, therefore, adapts accordingly and starts sending me ski videos to watch on my iPhone. Or the way in which a grapevine learns to grow up a trellis.

UD: In a January paper, you and your co-authors described two different views of what consciousness is and how it relates to the brain. On the one hand, we can think of consciousness as essentially the patterns of brain activity that are present in humans and other animals. Using this definition, if scientists could just somehow replicate those patterns in an organoid, then the organoid would be conscious.

On the other hand, some might say it’s not the brain per se that’s conscious, but rather the subject — the human who possesses the brain. Using this definition, organoids can never become conscious because they are just a part, and not a whole human. Am I understanding this correctly?

MO: That’s a good recap of our article. The main issue at the end of the day is, how does consciousness relate to its neural mechanisms? One view would say that consciousness is the neural mechanisms in the brain that are present whenever consciousness is present.

But if consciousness is a capacity of subjects that is manifested using the brain — and is not identical to neural processes in the brain, and is not a capacity that arises from those neural processes, but rather uses those neural processes — then the question of whether or not a subject is present, or whether or not brain organoids are subjects, is a vital question. If you think that the answer to that question is “no” — that they’re not subjects — then they could never possibly be conscious.

As the philosopher Mihretu Guta puts it, consciousness is not a free-floating property, but it is always a property of a subject. Or we could say it kind of like how Descartes said, “thinking requires a thinker.”

UD: That’s an interesting comparison to “thinking requires a thinker.” I see what you’re saying, because we just talked about how non-living things can learn, which may be considered thinking — I go back to algorithms.

MO: You can see how there are significant parallels here between detecting consciousness or just the question of whether or not AI could ever become conscious, or computers could ever become conscious, and whether or not brain organoids could become conscious. Now, I think that it’s far more likely that brain organoids could become conscious, just because they have a lot more in common with the neural networks in our brains that we already know correspond to consciousness.

I don’t want to come across as dismissive about the question of whether or not brain organoids could become conscious. I think that it’s a really important question. But the more I’ve done research on this topic, the more doubtful I’ve become.

UD: When I share in casual conversation that some research teams have built brain organoids that can learn to play simple video games, I’m sometimes asked whether this kind of research is ethical. There’s something about cultivating human cells and then teaching them to learn that seems almost parental. Is this because of how the research gets reported in news articles? Are we wrong to ask about the ethics of this?

MO: I think it is certainly not wrong to ask about the ethics of this. Whether we are scientists doing research, bioethicists guiding research, or taxpayers funding research, we have a moral obligation to think through the ethics of such research. But to do that well, we must recognize that there are different senses in which something might learn, and some of those senses of the term “learn” are morally significant and imply conscious learning, and other senses do not.

I think that it’s most likely that human brain organoids are not learning in a conscious sense, or a morally significant sense, but are learning in the same way that algorithms learn, or that we might say that plants learn in the sense that they adapt to their environment. But even if that’s the case, we still have a moral obligation to ask these questions and to go about doing scientific research and funding scientific research in an ethically responsible manner, which requires ethical reflection about what we’re doing.

UD: Speaking of funding: The National Institutes of Health has implemented new policies to reduce the use of experimental animals by replacing them with new approaches, including brain organoids. As a bioethicist, how do you weigh the tradeoffs between using animals versus using organoids in research?

MO: If brain organoids are not capable of being conscious — and are only human in the sense that they are grown from induced human pluripotent stem cells — then I think using brain organoids is far more ethical than using animal models because animal models are conscious subjects.

Brain organoids can be a good step forward, assuming they are grown from induced human pluripotent stem cells. That allows us to sidestep ethical concerns about the use of human embryos, thereby safeguarding public trust in science.

UD: Is there anything else that you’d like to add?

MO: I would summarize the level of worry we should have about whether brain organoids could be conscious in the following way: We should be far more worried that brain organoids could be conscious than we are about AI being conscious, since brain organoids have far more in common with our brains than artificial neural networks do.

But we should be far less worried that brain organoids are conscious than we are about human fetuses being conscious, since fetuses originate via a biological process known by every parent on the planet to produce a subject capable of being conscious. That’s the easiest way in which I can summarize how we should evaluate the ethical risks involved.