Reefer Madness 2.0: What Marijuana Science Says, and Doesn’t Say

Scientific research on the effects of marijuana is rife with holes, thanks in large part to it still being categorized at the federal level alongside drugs such as heroin and LSD. Unfortunately, when research is scarce, it becomes easier to mislead people through cherry-picked data, sneaky word choice, and misinterpreted conclusions.

THE TRACKER

The Science Press Under the Microscope.

Which brings us to Alex Berenson and Malcolm Gladwell, and what happens when tidy narratives outrun the science.

Two weeks ago, Berenson, a former New York Times reporter and subsequent spy novelist, published a book with the ominous title “Tell Your Children: The Truth about Marijuana, Mental Illness, and Violence.” Gladwell, meanwhile, published a feature in the New Yorker, where he is a staff writer, drawing largely on Berenson’s book and questioning the supposed consensus that weed is among the safest drugs. Combined, these two works offer a master class in statistical malfeasance and a smorgasbord of logical fallacies and data-free fear-mongering that serve only to muddle an issue that, as experts point out, needs far more good-faith research.

Berenson’s main argument is relatively simple. In his book, he claims, essentially, that the existing evidence really does contain solid answers, painting a truly alarming picture about marijuana: That it can and does cause psychosis and schizophrenia. He then makes the leap that since psychosis and schizophrenia can lead to violence, marijuana itself is causing violence to increase in the United States and elsewhere.

Arriving at that conclusion requires a host of scientific and statistical sins. But first, it’s worth stating that there really is some research linking cannabis and schizophrenia. A 2017 National Academies of Sciences, Engineering, and Medicine (NASEM) report, which synthesized all the available research on marijuana, concluded in part: “There is substantial evidence of a statistical association between cannabis use and the development of schizophrenia or other psychoses, with the highest risk among the most frequent users.”

Berenson takes this conclusion and runs with it, far beyond what the researchers seem willing to say. In a 15-year study in Sweden, for example, experts call cannabis “an additional clue” into how schizophrenia forms, and point out genetics and other factors that confuse the issue. And last May, Aaron Carroll, a pediatrician who frequently writes about health care policy and research, noted in The New York Times that the “arrow of causality” between marijuana and psychosis is difficult to pin down.

One of the authors of the NASEM report, Ziva Cooper of the Cannabis Research Initiative at the University of California, Los Angeles, recently told Rolling Stone: “To say that we concluded cannabis causes schizophrenia, it’s just wrong, and it’s meant to precipitate fear.” To Carroll, again in The New York Times, Cooper added there isn’t yet evidence that clarifies the direction of the association. Put simply, existing data can’t answer fundamental questions like the following: Does smoking marijuana spark the onset of schizophrenia, or are people on the cusp of developing the disease simply more likely to smoke marijuana, either to self-medicate or for other reasons?

And that’s the topic that Berenson probably got least wrong. On virtually every issue in his 272-page book, he commits one of the most common logical errors: He mixes up correlation and causation. The classic example: Crime tends to spike in the summer; so does ice cream consumption. Did all that ice cream cause the crime? Of course not.

An important piece of Berenson’s argument is that rates of marijuana use have risen at around the same time as an increase in diagnoses of schizophrenia and other forms of psychosis. For example, separate studies from Finland and Denmark show an increase in such diagnoses in recent years. The authors of both studies wrote that the increases could be explained by changes to diagnostic criteria, as well as improved access to early interventions. In both cases, the authors do not rule out an actual change in incidence. But Berenson presents that possibility as a firm reality, and the rise in marijuana use as its cause. There is no real evidence that he’s right.

Berenson’s connection of marijuana to violence seems even more tenuous. He writes that violence has increased dramatically in four states that legalized marijuana in recent years: Alaska, Colorado, Oregon, and Washington. He notes that the number of violent crimes in those states increased faster than in the rest of the country between 2013 and 2017. On its face, he’s not wrong, but this is a great example of the liberties one can take with numbers.

For starters, Berenson bases his calculations on the total number of these crimes in his chosen states, rather than the rates per 100,000 people, which would account for population differences. According to the FBI, Colorado’s murder rate actually increased more slowly than the national rate from 2013 to 2017.
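To see why raw counts and per-capita rates can point in opposite directions, here is a minimal sketch in Python. The state, its crime counts, and its population figures are hypothetical and chosen only for illustration; they are not FBI data.

```python
def rate_per_100k(count, population):
    """Return the number of events per 100,000 residents."""
    return count / population * 100_000


def percent_change(old, new):
    """Return the percent change from old to new."""
    return (new - old) / old * 100


# Hypothetical state: illustrative numbers only, not real crime statistics.
count_start, pop_start = 150, 5_000_000
count_end, pop_end = 165, 6_000_000

# The raw count of crimes goes up...
count_growth = percent_change(count_start, count_end)

# ...but the population grew faster, so the per-capita rate goes down.
rate_start = rate_per_100k(count_start, pop_start)
rate_end = rate_per_100k(count_end, pop_end)
rate_growth = percent_change(rate_start, rate_end)

print(f"Change in raw count: {count_growth:+.1f}%")
print(f"Change in rate per 100,000: {rate_growth:+.1f}%")
```

In this made-up case the number of crimes rises while the rate per 100,000 people falls, which is why comparing totals across states with different population trends can mislead.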

Even in states where Berenson’s assertions hold true, his numbers don’t provide the whole story. For example, Washington state had a murder rate of 2.3 per 100,000 people in 2013 and 3.1 in 2017, an increase of 35 percent, compared to a national increase of around 18 percent. But this isn’t some clear, straight-line trend: The murder rate in Washington state actually fell by around 7 percent from 2015 to 2016. How does that fit into this narrative? You can goose the numbers by changing your starting point as well: The increase in the murder rate from a particularly violent 2012 through 2017 was only around 3 percent, much less than the national increase for that period. Possession of up to one ounce of weed for personal use became legal in Washington in late 2012, and the first recreational use stores opened in 2014. Which murder rate do you use?
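The baseline effect described above can be checked with a few lines of Python. The 2013 and 2017 rates come from the figures quoted here; the 2012 rate is not given in the text, so the sketch backs out an implied value from the "around 3 percent" figure, which makes it an approximation rather than an official number.

```python
def percent_change(old, new):
    """Return the percent change from old to new."""
    return (new - old) / old * 100


# Washington state murder rates per 100,000 people, as quoted in the article.
rate_2013 = 2.3
rate_2017 = 3.1

# Starting the clock in 2013 gives the alarming-looking figure.
change_from_2013 = percent_change(rate_2013, rate_2017)

# Assumption: the article says the 2012-to-2017 increase was "around 3 percent",
# so the 2012 rate implied by that statement is only an approximation.
approx_change_from_2012 = 3.0
implied_rate_2012 = rate_2017 / (1 + approx_change_from_2012 / 100)

print(f"2013 to 2017: {change_from_2013:.1f}% increase")
print(f"Implied 2012 rate: about {implied_rate_2012:.2f} per 100,000")
```

Moving the starting year back by one turns an increase of roughly 35 percent into one of roughly 3 percent, with the same endpoint and the same underlying data.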

And all that ignores the basic fact that even if those states are seeing increases in violence that outpace some others, that says nothing about why. Could it be partially due to the legalization of marijuana? Sure. Could it be completely random statistical noise? Also sure.

There are dozens more examples, often involving an oversimplification or outright misrepresentation of a study’s conclusions. Berenson suggests marijuana raises the risk of heart attacks (experts: evidence is insufficient), and that legalization spawns more fatal car crashes (experts: some studies have shown no difference from states without legalization, and testing positive doesn’t necessarily mean a driver was impaired at the time in any case). There are also questionable claims about rates of cannabis use in Colorado, the possible existence of fatal marijuana overdoses, and maybe most strikingly about potential connections — positive or negative — to the U.S. opioid crisis.

Some of these might be honest errors, but twisting the science so badly on so many studies suggests Berenson was trying to fit evidence to a predetermined thesis, rather than letting the data guide him. The errors are so numerous that this book, to be quite honest, deserves to be ignored. Unfortunately, after coverage in places like the New Yorker, it’s too late for that.

While “Tell Your Children” has problems, Malcolm Gladwell’s feature compounds them. It is little more than a glorified book review, relying heavily on a single source and failing to vet it in any meaningful fashion. Gladwell repeats shoddy statistics, jumps from one straw man argument to the next, and generally fails at the basic functions of a journalist. He does all this in the guise of the “just asking questions” truth-teller.

Gladwell wants you to think that he is just pointing out what’s missing in marijuana research, writing, for example: “Low-frequency risks also take longer and are far harder to quantify, and the lesson of ‘Tell Your Children’ and the National Academy report is that we aren’t yet in a position to do so.” But Berenson is doing the opposite: He is claiming — explicitly throughout the book — that the answers we’re looking for are here, and they say the opposite of what they actually say.

Nonetheless, Gladwell offers up Berenson’s same misleading statistics on violence without interrogating their accuracy, mentions two studies suggesting a gateway effect while ignoring plenty of contradictory research, and highlights some data generated by a New York University professor and friend of Berenson’s, which, while they may be valid, have not been peer reviewed or otherwise assessed by experts. Gladwell even misrepresents the professor, calling him a statistician, when his expertise is actually in politics and law.

In some spots, Gladwell is just regurgitating what he read with no context or input from other sources or experts. On the increased potency of modern-day weed compared to the 1960s and 1970s, here’s Berenson: “Imagine drinking martinis instead of near-beer to get a sense of the difference in power.” Now here’s Gladwell, describing that same increase: “… from a swig of near-beer to a tequila shot.” He changed the liquor, you see, so it’s fine.

Both writers make it seem as though they’re shining a light on a dark, unexplored corner of science and public policy. But they even take liberties with the claim that no one is paying attention. “Almost no one noticed the National Academy Report,” Berenson writes. Gladwell repeats the claim, saying it “went largely unnoticed.” An extremely quick Google news search reveals pieces on the report from Business Insider, Vox, NPR, Forbes, Quartz, and The Washington Post, just to name a few that came out at the time of its release. The New York Times, Berenson’s former employer, printed an editorial about the report, calling for rescheduling the drug in order to make research easier. What would people “noticing” actually look like?

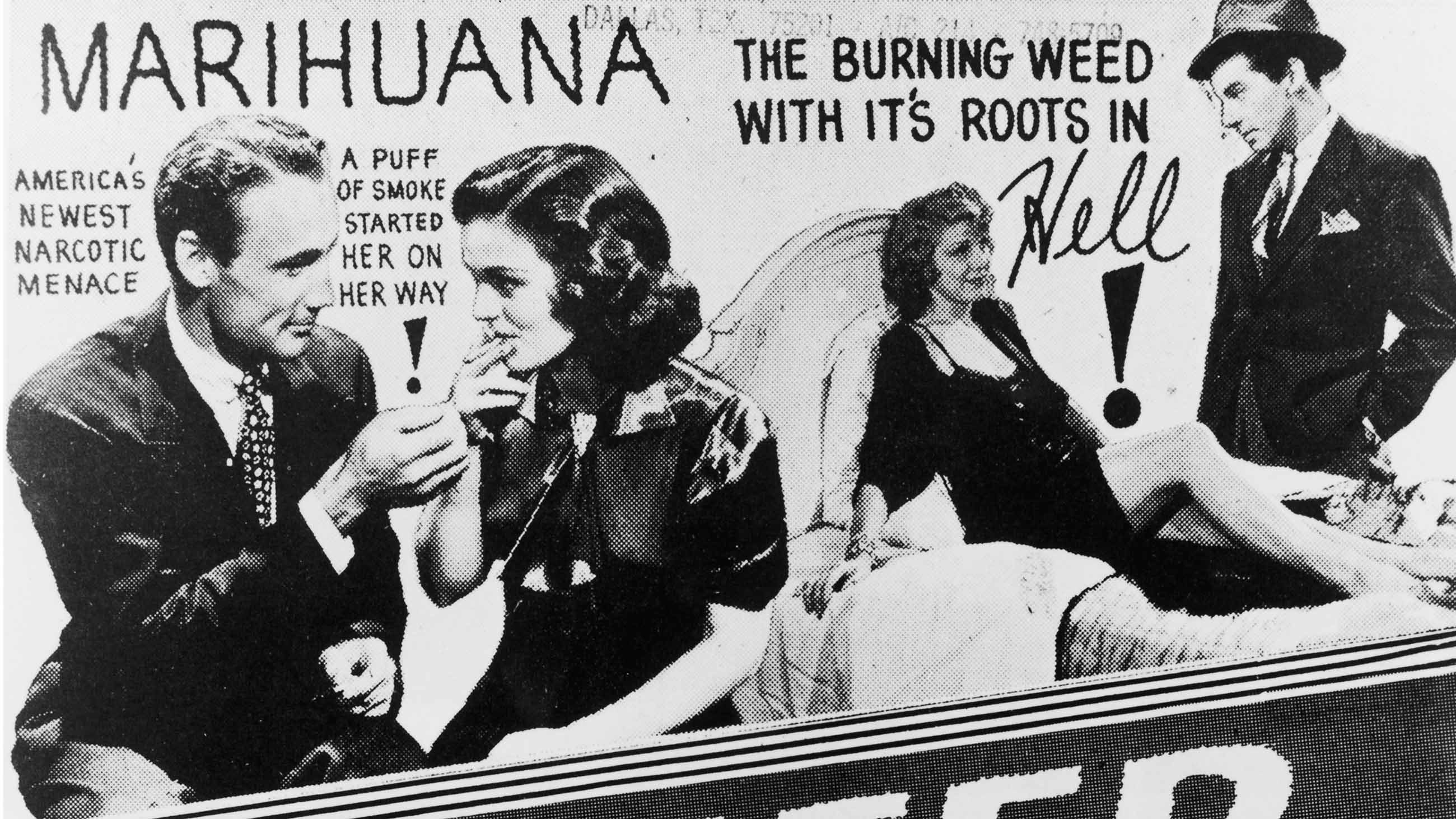

There is one point Gladwell gets right. In his piece, he writes that Berenson has “a novelist’s imagination.” Berenson repeatedly mentions how his book will likely spawn references to the infamous anti-weed propaganda film “Reefer Madness,” as if preemptively pointing out what other people will say about you somehow proves them wrong. But the book does come across as theater, from the stark title and the scary, smoke-tinged, red-on-black cover, to the author’s reliance on scary anecdotes.

The book opens with a horrific story of an octuple murder committed in 2014 in Cairns, Australia. Berenson spends entire chapters on gruesome tales of murder and violent psychosis, of course all linked back to smoking pot. At one point, he even acknowledges the scientific maxim that “the plural of anecdote is not data,” but then proceeds with an argument of majestically twisted logic. If the data really do exist, he says, then the anecdotes are sure to follow. How the anecdotes he supplies somehow prove the existence of data is unclear.

The debacle provides a cautionary tale for other science writers: Avoid these sins of omission, misrepresentation, and cherry-picked data. Do the work to find many credible sources for each story; don’t rely on a single flawed book. Scientists, unlike many in the general public, are perfectly comfortable with uncertainty, and with addressing gaps in knowledge. Part of the challenge for any good journalist is to reflect these uncertainties and nuances clearly and honestly, not to squeeze a compelling, utterly false narrative from uncooperative data.

Clearly, neither Berenson nor Gladwell was up to the task.

Dave Levitan is a freelance journalist based in Philadelphia who writes about energy, the environment, and health. He is the author of “Not A Scientist: How Politicians Mistake, Misrepresent, and Utterly Mangle Science.”

Comments are automatically closed one year after article publication. Archived comments are below.

The most shocking thing about both works was how similar their words were to the hysterical nonsense published in the 1930s. Nothing has changed, we’re stuck in a loop where fake is real and real is irrelevant; the insane have taken over the asylum. The Hoax Papers, Big Fat Surprise, Reefer Madness, these works demonstrate the industry of scientific study is built on hiding its flaws. There are so many writers I’ve lost respect for in recent years by reading their misinformed views on cannabis. Again, and again, I see wild leaps of imagination parading as fact, and always in a slightly condescending tone, as if it’s okay, it’s the adults talking now … clueless clowns.

Let’s be fair now. The term ‘Reefer Madness 2.0’ was coined eight years ago by my friend and colleague Derek Williams. Credit where credit is due. https://ukcia.org/wordpress/?p=924

does anyone still think Malcolm Gladwell has any credentials to write about science? moreover all of this debate is off point: we all know WITH STATISTICS that the most dangerous drugs in terms of death, homicide, and premature mortality are alcohol and tobacco

I think the author doesn’t know or work with enough people who smoke regularly, or smoke himself, to see reality. I don’t need a study to recognise someone who’s a long-term regular smoker, including my sister and brother-in-law. The problem is that there are no long-term studies of 20 years or more.

I think this topic is much ado about nothing important, in reality. Alex Berenson worked for the New York Times as a corporate hack, propagandizing. As an author? He does basically the same thing. Trash novels. Garbage, escapist fantasies full of duplicity and violence that uphold the “John Wayne” ideal Americans have been conditioned to believe in…

One could make the case that both Berenson and Gladwell are functionally insane, as are the majority of Americans. They carry on with business as usual while the Earth is rapidly becoming a planet that will not support Human beings or oxygen-breathing life forms. The science is clear, and indisputable. Physics will always have its way and natural law does rule this planet’s geological processes, not any politicians or billionaires. Humanity is faced with extinction in the near-term future, but Americans are more concerned with peoples’ personal behaviors and choice of life styles…

That being said, as a master “Cannabotanist,” grower, breeder, and life-long medicinal user with over 50 years of personal knowledge of cannabis, in my own experience, through observation of several different friends over the years, I have seen some correlation between cannabis use and people developing schizophrenic behaviors and paranoia, or becoming chronically depressed.

In particular, a dear friend and fellow ethnobotanist and Cannabis user and grower developed paranoid schizophrenia and ended up having to be hospitalized. It took a period of about 2 to 3 years, and started after he’d gotten obsessive about sativas and the high-THC, more cerebral effects. As I followed him and investigated with other friends and people in our Colorado Cannabis “affinity group,” I found that the few other people who admitted to seeking treatment for mental health issues also had a strong preference for pure sativas or sativa-dominant strains.

It was a disturbing realization to me personally, but damn sure worth being aware of. I changed my own usage after that finding, and started breeding for a more balanced cannabinoid profile. Since reading this article and contemplating the local cannabis crowd over the past few years, I think that there is a clear danger of mental health issues for a SMALL PERCENTAGE of people, who I would imagine are likely predisposed to mental instability; and that High THC with little to no CBD/CBN to act as a “buffering agent” is a potent psychoactive and not to be taken lightly or unconsidered.

This is only my personal experience, and not any recommendation or advice. I don’t advocate Cannabis use to anyone. It’s a personal choice and should be an informed choice made by an educated adult, in my opinion. I will say that being an “Aspie” (Autistic spectrum, Asperger’s syndrome), Cannabis has been the most beneficial medication of all of the many pharmaceuticals I have experienced. But, like anything else people might could make use of, it is most definitely not for everyone. Do your own research and piss on the fascist sympathizers like Berenson and Gladwell. They don’t have anything to say but the same old bullshit and lies.

A company called Strainsforpains is showcasing a demo of an app coming out soon that will revolutionize how doctors, patients and dispensaries look at education choices in pain management. This company allows the patient with any of 100 illnesses or pains to see scientifically why a specific brand or strain is best for their pain management, and not just what a dispensary would ‘recommend.’ There is no equivalent on the market. Not in Canada and not here.

“Never let the facts get in the way of disseminating an effective piece of hysterical rhetoric”

~~ The Prohibitionist’s Motto

It seems like the author of this article isn’t aware of the context for these pieces. In his world I guess things are different.

In my world there is a constant stream of statements about how marijuana has no negative effects – and that the lack of bad effects is a known thing. The only reason why marijuana could be illegal is because people are puritanical, racist, or both.

In that context both of these pieces are pointing out that the data to show there are no negative effects is NOT there – regardless of how many hundreds of thousands of times it has been stated. There may even be evidence to show that there are negative effects – just correlation, not causality but still.

The point of both pieces is that the current ‘common knowledge’ is not based in any evidence.

People diagnosed with schizophrenia who are in treatment are much more likely to be victims. People who are undiagnosed, and those who refuse treatment? Delusions certainly do not contribute to healthy interactions. Still, it is worth saying that there really is some research relating cannabis and schizophrenia, and that is an important point.

It’s the other way around; if someone has schizophrenia or another mental disorder, they’re more likely to use marijuana to self medicate. It may bring underlying mental illnesses to the surface for people who would have developed the condition further down the road regardless of their prior marijuana use.

That might actually explain the correlation, if they gravitate towards it for self-treatment.

Diagnosed. Critical distinction. Diagnosed schizophrenics in treatment are more likely to be victims. Undiagnosed folks, and those refusing treatment? Well, delusions don’t make for healthy interactions, and as the daughter of a schizophrenic diagnosed by the military and then discharged into the world without follow-up, I should know. I also know that “But first, it’s worth stating that there really is some research linking cannabis and schizophrenia.” means more to me than all the valid criticisms of the writing. Just get the data. Now. And stop prescribing it to vulnerable teens/young adults until you do.

In Holland, marijuana has been in regular use for decades. Why not just pull the academic research from there?!

Martin Ball’s perspective in the chapter “A Few Notes on Other Substances” at the end of his book called Entheogenic Liberation –

https://www.amazon.com/Entheogenic-Liberation-Unraveling-Nonduality-5-MeO-DMT-ebook/dp/B074CP77JQ/ref=sr_1_fkmrnull_1?crid=3408AFCAL41PL&keywords=entheogenic+liberation&qid=1548129816&sprefix=entheogenic+libe%2Caps%2C205&sr=8-1-fkmrnull

I hate the “people with schizophrenia are criminals” trope. They’re more likely to be victims of violent crime. This just increases the stigma of mental illness.

According to “Epidemiology of Schizophrenia” on Wikipedia, most countries with high cannabis use have some of the lowest rates of schizophrenia. The Netherlands are 173rd, Canada 177th, USA 181st, UK 185th, Iceland 191st and Australia last 192nd (Iceland is said to have the highest rate of marijuana/cannabis use in the world). The country with the lowest rate of cannabis use in the world, Singapore, is said to have the 7th highest rate of schizophrenia.