Medical Imaging Goes to the Movies

Last fall, the Ars Electronica Center in Linz, Austria — nicknamed the Museum of the Future — became something of a classroom for college students training to be X-ray technicians and physical therapists. Amid exhibitions of satellite images and 3D printed objects, the students sat in front of an IMAX-size movie screen, donned 3D glasses and viewed medical images of various diseases, while a complex of blood vessels and muscles appeared to ease toward them and then recede.

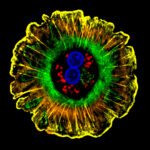

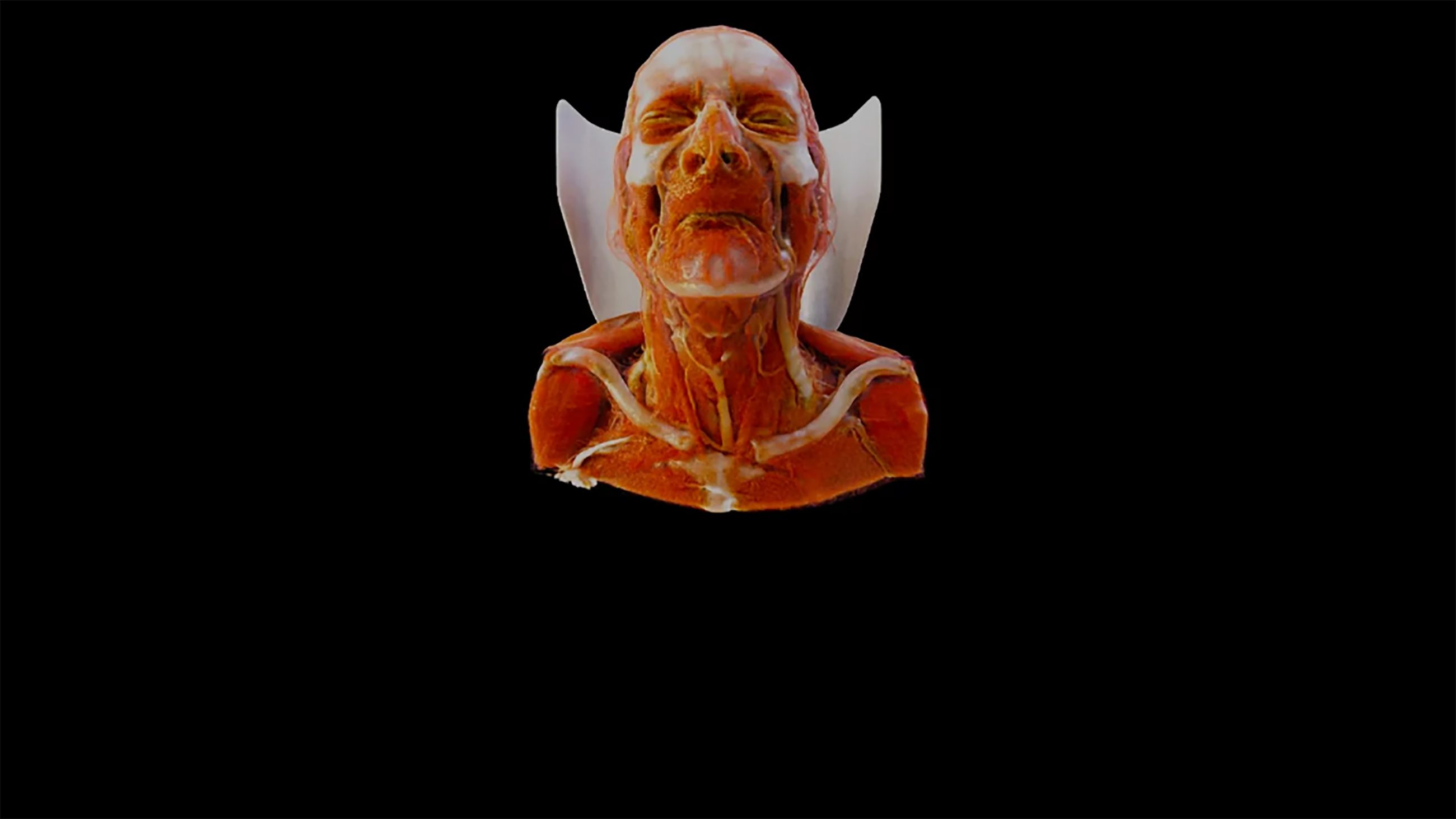

For all of the technological wizardry on display, what really set the experience apart from typical anatomy lectures was that the medical images looked exceedingly real. Students in the audience could discern discrete muscle fibers, see light playing across the moist and uneven surfaces of individual organs, and follow the myriad twists and turns of blood vessels.

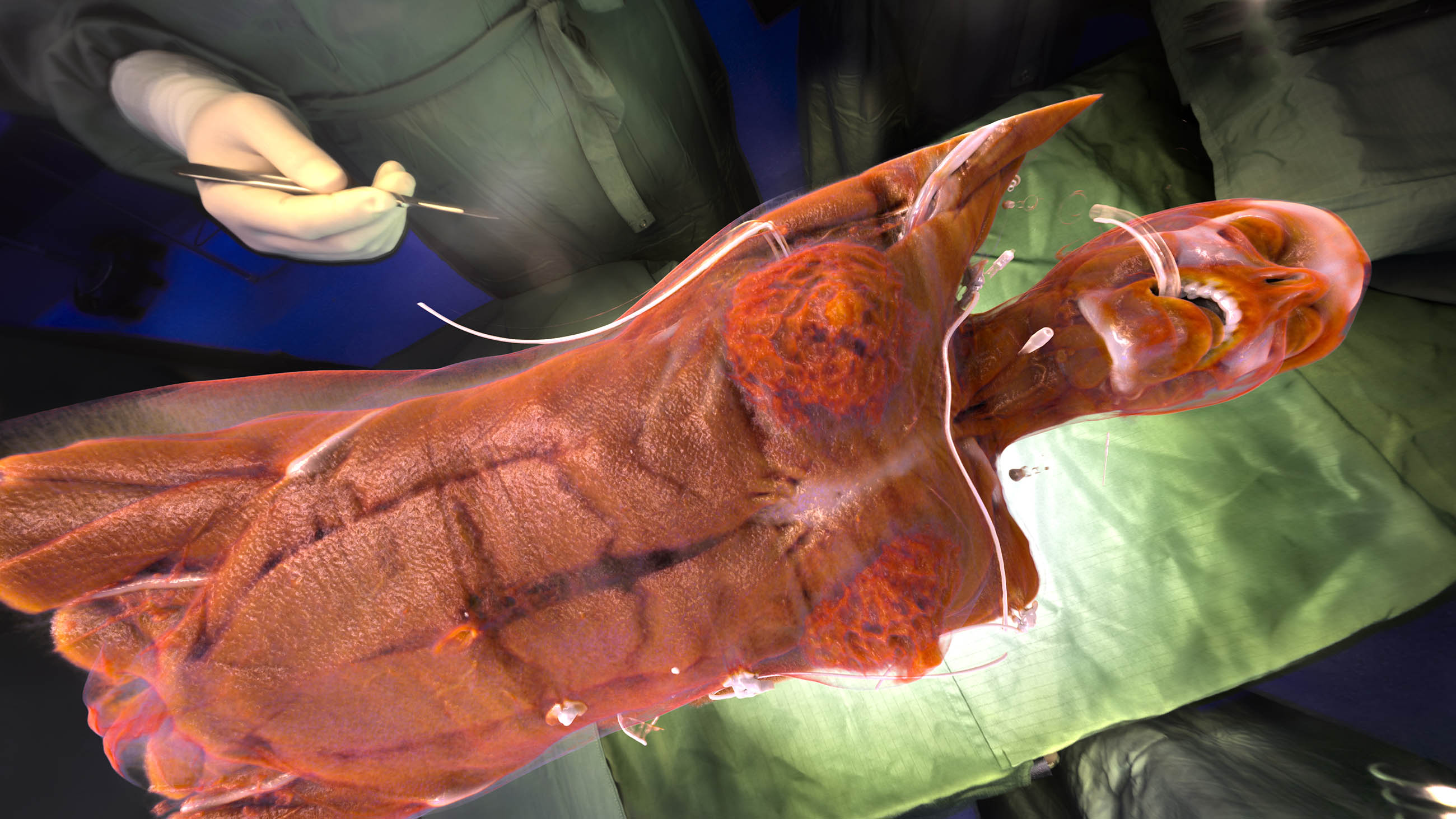

“It is really fantastic,” recalled audience member Daniel Schneeweis, 20, who is studying for a bachelor’s degree in X-ray technology at the University of Applied Sciences for Health Professions Upper Austria, “because it looks like you have in front of you a patient at the operating table.”

Since the dawn of computed tomography (CT) and magnetic resonance imaging (MRI), medical image specialists have been able to compile and model increasingly realistic images of the interior of the human body. But the images on display in Austria were of a novel variety that, its developers hope, could bring a new level of verisimilitude to the study of human anatomy, and perhaps even to surgeons preparing to put that anatomy under the knife. It’s one of a number of new medical imaging technologies that promise to bring benefits to both doctors and patients.

“We asked ourselves how can we go further,” said Klaus Engel, a Germany-based researcher who helped to spearhead the new technology on display in Austria, which is now being tested in several dozen medical centers around the world. “How can this be even more realistic, and how can we target a more general audience?”

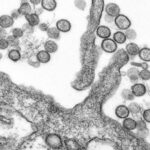

To be sure, medical imagery has already come a long way over the last few decades. A typical CT or MRI scan produces hundreds to thousands of cross-sectional images of the region of the body being scanned. Computer software can then compile those two-dimensional pictures into a three-dimensional rendering using a variety of methods, the most common of which is called volume rendering. Since the late 1980s, everything in that imaging production chain has become more sophisticated: CT and MRI machines can take higher resolution pictures, computers can store more data, and the software packages that piece the images together use far more complex algorithms than ever before.

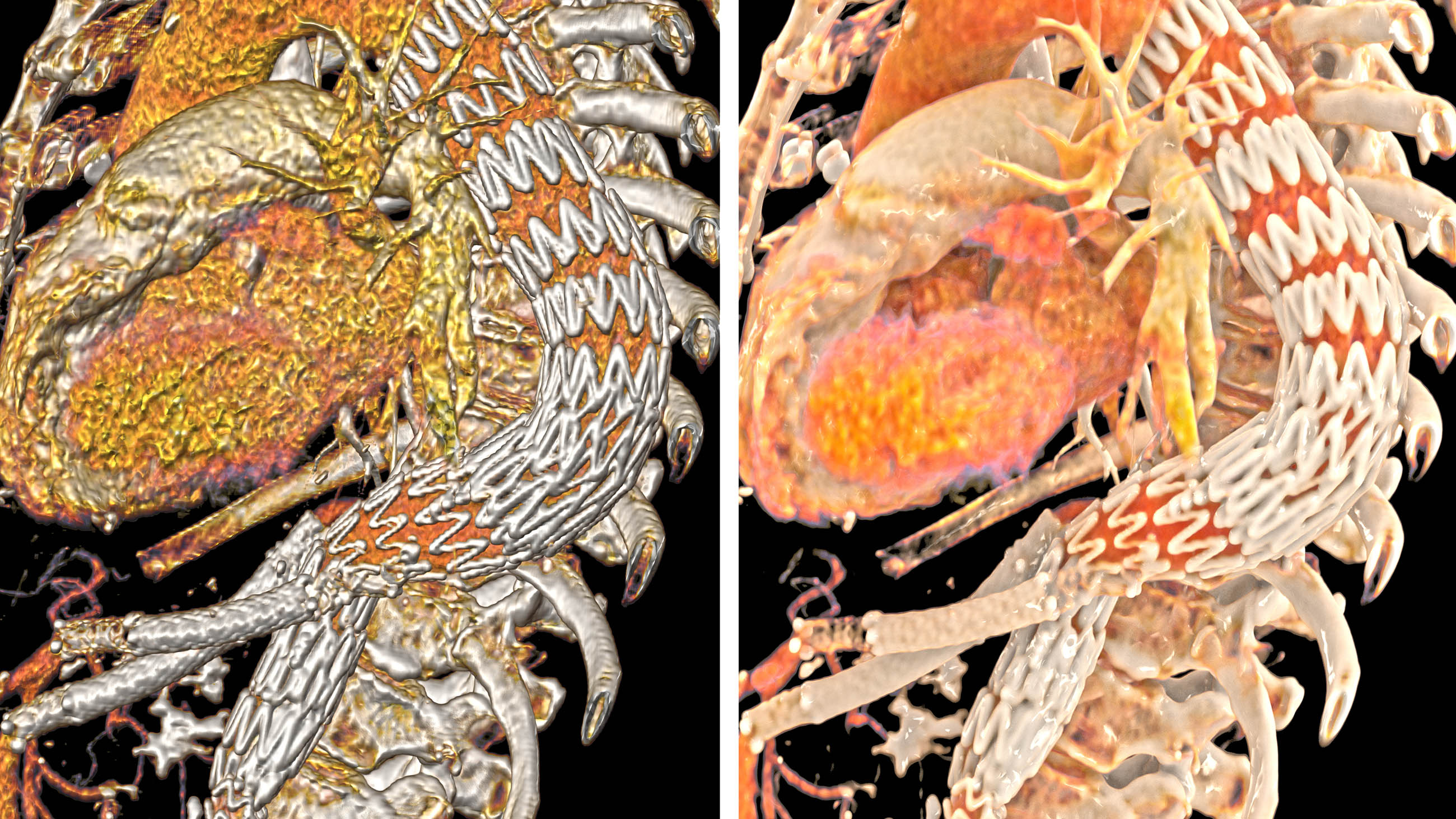

But Engel and his colleagues at the German medical technology firm Siemens Healthineers felt that today’s volume-rendered images still lacked sufficient detail and depth, such that they are often hard for anyone who is not a radiologist to understand. In response, the researchers took inspiration from Hollywood, creating new and more complex ways for rendered tissue to appear to reflect, absorb, and otherwise interact with light — a technique they call “cinematic rendering.”

Existing algorithms assume that light takes one of only a few paths through the body, and they render their images accordingly. Light could be absorbed by dense tissue such as bone, for instance, while passing through the less dense fat or muscle, or bouncing off the tissue surface.
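For readers who want to see the idea in code, the simple light model described above can be sketched in a few lines of Python. This is a toy illustration, not the software used by Siemens Healthineers or any scanner vendor: the function names `composite_ray` and `render_volume`, the `opacity_scale` parameter, and the rule that denser tissue is both brighter and more opaque are all assumptions made for the example. Each pixel gets a single straight ray that passes through the stack of slices, and tissue along the way either absorbs the light or lets it through.

```python
def composite_ray(samples, opacity_scale=0.5):
    """Front-to-back compositing of density samples along a single ray.

    Each sample is a tissue density in [0, 1]. Denser samples are treated as
    both brighter and more opaque (an illustrative assumption).
    """
    brightness = 0.0
    transmittance = 1.0  # fraction of light still getting through
    for density in samples:
        alpha = min(1.0, max(0.0, density * opacity_scale))
        brightness += transmittance * alpha * density
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:  # the ray is effectively blocked
            break
    return brightness


def render_volume(slices, opacity_scale=0.5):
    """Render a stack of equally sized 2D slices into one 2D image.

    One ray per pixel travels straight through the stack, first slice first.
    """
    rows, cols = len(slices[0]), len(slices[0][0])
    return [
        [composite_ray([s[r][c] for s in slices], opacity_scale)
         for c in range(cols)]
        for r in range(rows)
    ]


# Two tiny 1x2 "slices": dense tissue is in front on the right, behind on the left.
slices = [[[0.0, 1.0]], [[1.0, 0.0]]]
image = render_volume(slices, opacity_scale=1.0)
```

Because every ray follows one straight path, this kind of model cannot capture light that scatters beneath a surface or bounces between neighboring tissues.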

The new method gives the behavior of light many more options. It might pass through some layers of tissue, and yet still interact with others below the surface. And if it bounces off of one surface, it can still interact with surrounding tissues. The result is that bumps, crevices and other features that give surfaces texture are far more apparent. The difference is akin to that between the smooth plastic-and-metal characters in the 1995 film “Toy Story” by Pixar — one of the first developers of any kind of volume rendering method — and those in the 2014 Disney movie “Big Hero 6,” which used a new, more advanced light-rendering software called Hyperion.

Engel and his team also took some inspiration from the filmmakers of “The Lord of the Rings” franchise, who took 360-degree photographs of real-world scenes and then used them to make so-called “light maps” that could illuminate computer-generated characters placed within them. Siemens Healthineers is busy building a library of similar light maps taken in a variety of locations, including a forest, a church and a subway station.

The goal of the light maps is not to make a patient’s body look like it is in the middle of a forest, but to allow clinicians to change how much light is cast on different parts of the medical image. Some maps, such as the forest scene, will provide a rich flow of light from overhead. Other maps, like the illumination bouncing off the many reflective surfaces of a subway station, can wash the body’s organs and tissues in light from a variety of directions. The flexibility can add to the perceived realism of the images and, the developers hope, make potential problems like tumors easier to spot.
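The effect of swapping light maps can be illustrated with a small Python sketch. It is a simplified stand-in, not the company's method: the two maps here are hypothetical functions (`overhead_map` and `surround_map`) rather than real 360-degree photographs, and the surface is assumed to be perfectly matte. The code adds up the light arriving at a patch of tissue from evenly spread directions, weighting each direction by how squarely it faces the patch.

```python
import math


def sphere_directions(n=200):
    """Return n roughly evenly spread unit vectors (a Fibonacci spiral)."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    directions = []
    for i in range(n):
        z = 1.0 - (2 * i + 1) / n
        r = math.sqrt(1.0 - z * z)
        phi = i * golden_angle
        directions.append((r * math.cos(phi), r * math.sin(phi), z))
    return directions


def diffuse_light(normal, light_map, n=200):
    """Average light reaching a matte surface patch that faces `normal`."""
    total = 0.0
    for d in sphere_directions(n):
        facing = sum(a * b for a, b in zip(normal, d))
        if facing > 0.0:  # only light arriving from in front of the patch counts
            total += facing * light_map(d)
    return total / n


def overhead_map(direction):
    """Hypothetical forest-like map: light arrives only from high overhead."""
    return 1.0 if direction[2] > 0.5 else 0.0


def surround_map(direction):
    """Hypothetical subway-like map: equal light from every direction."""
    return 1.0


up, down = (0.0, 0.0, 1.0), (0.0, 0.0, -1.0)
underside_overhead = diffuse_light(down, overhead_map)
underside_surround = diffuse_light(down, surround_map)
topside_overhead = diffuse_light(up, overhead_map)
```

In this sketch, the underside of a structure receives no light under the overhead map but is lit under the surround map, which is the kind of difference a clinician could exploit by switching maps.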

A few years ago, Siemens gave a preview of the technology to Adam Davis, an assistant professor of radiology at New York University Langone Medical Center. “It first struck me as being visually superior, it had greater attention to detail … texture and spatial relationships and less distraction by ambient noise,” says Davis, who does product development for Siemens.

Davis says the company is now supplying him with the software and a computer station at NYU (though it is not otherwise funding him) to start testing cinematic rendering and comparing it with traditional volume rendering.

He and other researchers say that there are some fundamental limitations to the new technology as a diagnostic tool — though it may prove useful in other areas.

Among the challenges, according to Cynthia McCollough, director of the CT Clinical Innovation Center at Mayo Clinic, which evaluates cinematic rendering and other Siemens research products: No 3D image is ever going to take the place of a radiologist looking at the individual 2D pictures from a CT or MRI scan.

Cross sections from such scans show the full range of light signals, capturing anatomical structures in gray scale, McCollough said, and information can be lost when these pictures are compiled into a 3D image and the structures are artificially assigned colors. 3D images can be helpful for summarizing the layout of bones or blood vessels, but are not likely to become a stand-alone diagnostic tool anytime soon, McCollough noted — adding that cinematic rendering could be “an amazing communication tool.”

Doctors, for example, could show patients on a computer screen strikingly vivid images of what their pathology looks like, she explained. “It is huge in terms of helping patients understand what you are going to do to them and telling them what to expect.”

That’s been the experience of Thomas Kroes, a researcher in the Netherlands who developed and presented a similar image rendering software package — still in prototype stage — called Exposure Render, a couple of years before Engel and his team got started. “The biggest enthusiasm is coming from people who have to explain anatomy to surgeons in training,” Kroes said, “and also to patients themselves.”

Christopher Schlachta, a professor of surgery at Western University in Ontario, agreed — at least for those patients who aren’t disturbed by highly realistic imagery of their insides. “It would not be the most uncommon thing that I will review a patient’s [CT] scan with them,” he said. “If I had a 3D image, I think it would be helpful for the patients because they are not trained to read” CT and MRI cross sections.

High levels of detail and varying lighting could also help doctors planning difficult surgeries involving the brain, liver or other organs, where it is critical to avoid blood vessels or other important anatomy, says Schlachta, who performs gastrointestinal cancer surgery. “Once you get into the surgery, you are looking for the things you remember from the image review you did beforehand,” he said, “so the more real the image looked, the easier it is going to be for the surgeon to remember.”

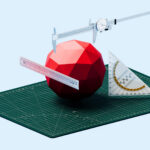

Often surgeons’ mental images come from 2D pictures, which are the only type of data available in many hospitals, although advances that go well beyond cinematic rendering are already being developed on this front, according to Lawrence James Rizzolo, a professor of surgery at Yale School of Medicine. These include anatomical structures printed in 3D, and holographic medical images that float in front of a screen, giving surgeons opportunities to physically interact with virtual anatomy before surgery.

Both of these tools are also created using data from CT or MRI scans.

Meanwhile, cinematic rendering is finding unexpected adherents in other fields. The practice among forensic scientists in Switzerland, for example, is to take CT and MRI scans of dead bodies to help determine the cause of death and to guide the autopsy. Some of those scientists are now using the trove of data from these scans to compare cinematic rendering with traditional volume-rendering methods. They expect their findings will be published in a medical journal later this year.

“It clearly generates an image that, worst case scenario, conveys the same message and looks better,” Lars Ebert, a forensic imaging researcher at the University of Zurich, said of the cinematic rendering technique. “And in the best case scenario, a better image that highlights something.”

Carina Storrs is a freelance science and health journalist based in New York City. Her work has appeared in a variety of publications, including Scientific American, The Scientist, Discover, CNN Health, Seleni.org and Health.com.