Experts Debate the Risks of Made-to-Order DNA

In November 2016, virologist David Evans traveled to Geneva for a meeting of a World Health Organization committee on smallpox research. The deadly virus had been declared eradicated 36 years earlier; the only known live samples of smallpox were in the custody of the U.S. and Russian governments.

Evans, though, had a striking announcement: Months before the meeting, he and a colleague had created a close relative of smallpox virus, effectively from scratch, at their laboratory in Canada. In a subsequent report, the WHO wrote that the team’s method “did not require exceptional biochemical knowledge or skills, significant funds, or significant time.”

Evans disagrees with that characterization: The process “takes a tremendous amount of technical skill,” he told Undark. But certain technologies did make the experiment easier. In particular, Evans and his colleague were able to simply order long stretches of the virus’s DNA in the mail, from GeneArt, a subsidiary of Thermo Fisher Scientific.

If DNA is the code of life, then outfits like GeneArt are printshops — they synthesize custom strands of DNA and ship them to scientists, who can use the DNA to make a yeast cell glow in the dark, or to create a plastic-eating bacterium, or to build a virus from scratch. Today there are dozens, perhaps hundreds, of companies selling genes, offering DNA at increasingly low prices. (If DNA resembles a long piece of text, rates today are often lower than 10 cents per letter; at this rate, the genetic material necessary to begin constructing an influenza virus would cost less than $1,500.) And new benchtop technologies — essentially, portable gene printers — promise to make synthetic DNA even more widely available.
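The per-letter arithmetic behind that estimate can be sketched as follows. This is a rough illustration, not a quote from any provider: the 10-cent rate comes from the text above, while the genome length of about 13,500 letters is an outside assumption (a commonly cited approximate size for an influenza A genome), and the names `ASSUMED_GENOME_LENGTH` and `synthesis_cost_usd` are hypothetical.

```python
# Back-of-the-envelope check of the cost figure quoted above.
PRICE_CENTS_PER_LETTER = 10      # from the article: "often lower than 10 cents per letter"
ASSUMED_GENOME_LENGTH = 13_500   # assumption: approximate influenza A genome size, in letters


def synthesis_cost_usd(n_letters: int, cents_per_letter: int) -> float:
    """Return the cost in dollars of synthesizing n_letters of DNA."""
    return n_letters * cents_per_letter / 100


cost = synthesis_cost_usd(ASSUMED_GENOME_LENGTH, PRICE_CENTS_PER_LETTER)
print(f"Estimated cost at 10 cents per letter: ${cost:,.0f}")
```

Under those assumptions the total lands below the $1,500 ceiling cited above, and any rate under 10 cents per letter lowers it further.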

But, since at least the 2000s, the field has been shadowed by fears that someone will use these services to cause harm — in particular, to manufacture a deadly virus and use it to commit an act of bioterrorism.

Meanwhile, the United States imposes few security regulations on synthetic DNA providers. It’s perfectly legal to make a batch of genes from Ebola or smallpox and ship it to a U.S. address, no questions asked — although actually creating the virus from that genetic material may be illegal under laws governing the possession of certain pathogens.

Whether that’s a legitimate cause for alarm is under debate. Some experts say that creating a virus from synthetic DNA remains prohibitively difficult for most scientists, and that fears of an attack are often overblown. At the same time, new nonprofit initiatives, fueled by money from Silicon Valley philanthropists, and at times evoking worst-case scenarios, are pushing for more stringent protections against the misuse of synthetic DNA. Implementing effective security, though, is tough — as is enforcing any kind of norm in a sprawling, multinational industry.

“It’s not that I’m worried about something happening tomorrow. But the reality is, this capability is increasingly powerful in terms of how long the DNA fragments can be, what you can create with them, the ability of recipients to then assemble the DNA fragments into a new virus,” said Gregory Koblentz, a biodefense researcher at George Mason University. “This is the kind of thing that we really should be more proactive on — and try to get ahead of the curve.”

Perhaps the most prominent scientist sounding warnings about the danger of unchecked DNA synthesis is Kevin Esvelt, a biotechnologist at MIT. In conversation, Esvelt moves quickly between technical detail and Cassandra-like alarm. He often talks about Seiichi Endo, a Japanese researcher with graduate training in virology who joined the apocalyptic Aum Shinrikyo sect in 1987. Endo helped carry out a poison gas attack on the Tokyo subway, and the group tried — but seemingly failed — to obtain Ebola virus.

Since then, creating pathogens has gotten easier, thanks in part to the wider availability of synthetic DNA. “It’s really hard for me to imagine a graduate-trained virologist from Kyoto University being unable to assemble an influenza virus today,” Esvelt said.

As Esvelt describes it, the problem of synthetic biology is about power: New technologies have handed a group of scientists the keys to build unfathomably dangerous bugs. Very few — perhaps none — of those scientists has any wish to exercise this grim superpower. But, Esvelt argues, it’s only a matter of time before the next Endo comes along.

By Esvelt’s back-of-the-napkin estimate, perhaps 30,000 scientists worldwide have the skills to build a strain of pandemic influenza, provided they can find someone to synthesize the DNA for them. The consequences of unleashing such a pathogen could be catastrophic.

“No individual can make a nuke,” Esvelt said. “But a virus? That’s very doable, unfortunately.”

Not everyone buys those figures. “There are 1000s of virologists, but far fewer with these skills,” virologist Angela Rasmussen wrote on Twitter in November, in a thread suggesting that Esvelt’s work overstates the risks of a bioterror attack. “Infectious clones aren’t something you can whip up in a garage,” she continued — even with a full set of DNA on hand.

Zach Adelman, a biologist who studies disease vectors at Texas A&M University, echoed those points — and questioned Esvelt’s broader approach. “It sounds like his typical scare tactics,” he wrote in an email to Undark. “Could a single, dedicated, malicious individual still make their own flu strain while avoiding detection? Maybe, but even in ideal circumstances these experiments require a substantial amount of resources.”

Even if someone manages to illicitly make a virus, carrying out a bioterror attack is still difficult, said Milton Leitenberg, a biosecurity expert at the Center for International and Security Studies at the University of Maryland. “All of this is unbelievably exaggerated,” he said, after reviewing testimony Esvelt delivered to a U.S. House of Representatives subcommittee in 2021 about the risks of deliberately caused pandemics.

Still, while experts may differ about the degree of risk, many agree that some kind of security for synthetic DNA is warranted — and that current systems may need an upgrade. “I do think that it’s worthwhile having a way of screening synthetic DNA that people can order, to make sure that people aren’t able to actually reconstruct things that are select agents, or other dangerous pathogens,” Rasmussen told Undark.

For years, some policymakers and industry leaders have pushed to beef up security for DNA synthesis.

In the 2000s, when the gene synthesis industry was in its early days, policymakers grew concerned about potential misuse of the companies’ services. In 2010, the U.S. government released a set of guidelines, asking synthetic DNA providers to review their orders for red flags.

Those guidelines do not have the force of law. Companies are free to ignore them, and they can ship almost any gene to anyone, at least within the U.S. (Under federal trade regulations, exporting certain genes requires a license.) Still, even before the government released its guidelines, major synthetic DNA providers were already strengthening security. In 2009, five companies formed the International Gene Synthesis Consortium, or IGSC. “The growth of the gene synthesis industry depends on an impeccable safety record,” the then-CEO of GeneArt wrote in a statement marking the consortium’s launch.

Consortium members agree to screen their customers. (They won’t ship, for example, to P.O. boxes.) And they agree to screen orders, too, following standards that Koblentz says are actually stricter than the federal guidelines.

But some companies never joined. According to one commonly cited estimate, non-IGSC members account for approximately 20 percent of the global DNA synthesis market. That’s little more than an educated guess. “We don’t really know, to be honest,” said Jaime Yassif, who leads the biological policy team at the Nuclear Threat Initiative, a think tank in Washington, D.C. And some companies, she and other analysts say, appear not to be screening at all.

Indeed, spend a few minutes on Google searching for synthetic DNA, and it’s easy to find non-IGSC companies advertising their services. It’s difficult to tell what kind of security measures — if any — those companies have in place.

In a brief phone call, Lulu Wang, an account manager for the Delaware-registered company Gene Universal, said the company did screen orders. She did not provide details, instead referring additional questions to an email address; the company declined to answer them. KareBay Biochem, a provider registered in New Jersey, did not reply to emailed questions. A man who answered the company’s phone, upon learning that he was talking to a reporter, said “Sorry, I have no comments,” and hung up.

Azenta Life Sciences, a Nasdaq-traded company, provides “complete gene synthesis solutions,” according to its website, after acquiring the synthetic DNA provider Genewiz in 2018. Nowhere does its website mention biosecurity. In an email, Azenta director of investor relations Sara Silverman wrote that the company “performs a biosecurity screen,” but declined to provide details. She did not answer a question about why Azenta had not joined the IGSC.

The industry as a whole has uneven security. “There’s no standardization process, there’s no certification, there’s no outside body checking to see how well your system does,” said James Diggans, head of biosecurity at Twist Bioscience, who currently chairs the board of the IGSC. As a result, he said, “companies invest along a broad spectrum of how much they want to put effort into this process.”

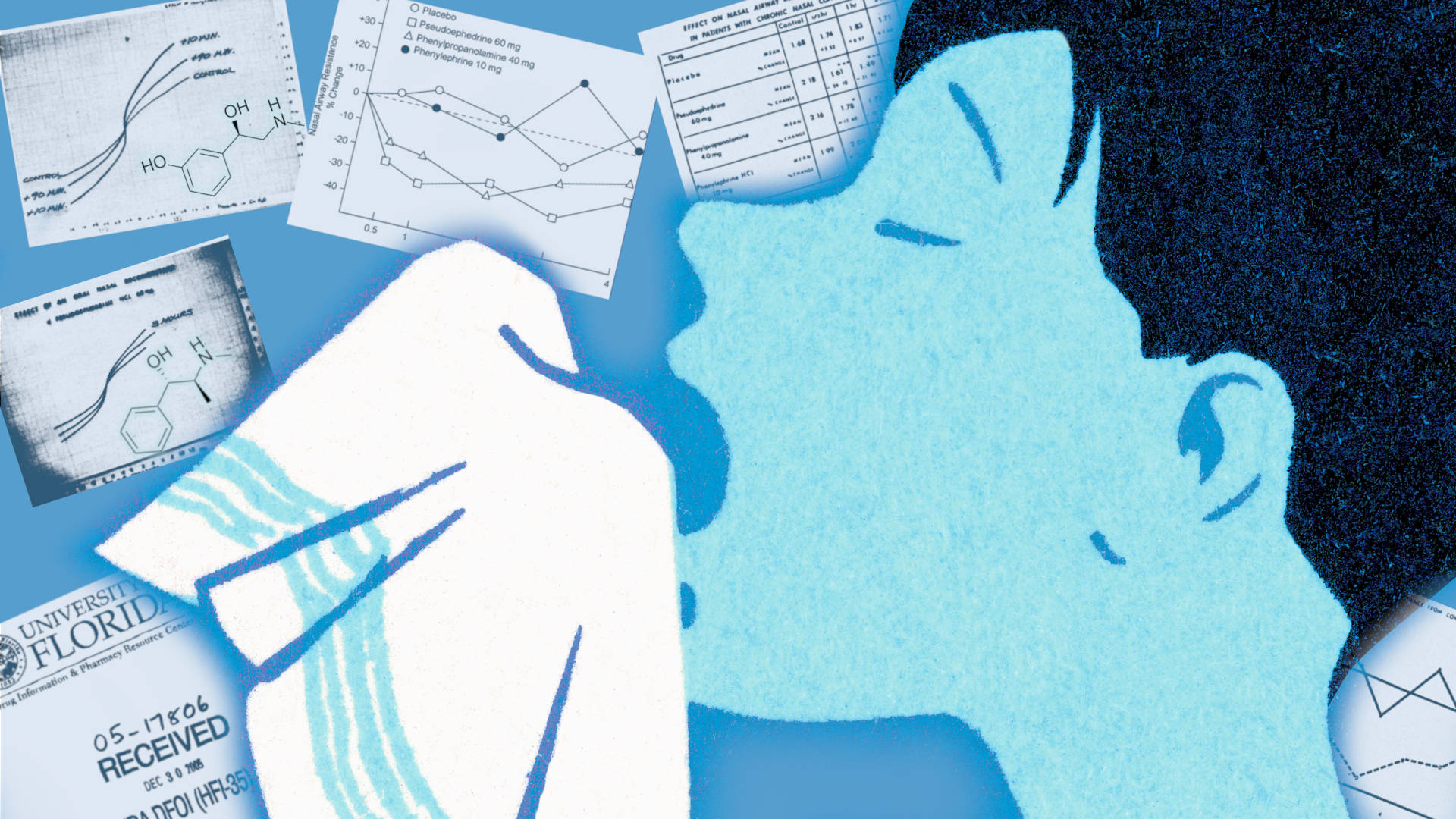

There are also financial incentives to cut corners. Existing DNA screening systems take a strand of DNA from an order and compare it against a database of so-called “sequences of concern.” If there’s a match, a bioinformatics expert reviews the order, a process that is expensive and time-consuming. “It is certainly an unfair competitive advantage,” Diggans said, “if you decide not to invest in security, or if you decide to invest minimally in security.”
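The compare-and-flag step described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not any company's actual system: it uses exact matching of fixed-length windows, the window length of 8 is arbitrary, the function names are hypothetical, and every sequence is a made-up string with no biological meaning. Production screeners are reported to rely on much larger curated databases and more tolerant matching.

```python
# Toy order screener: flag windows of an order that appear in a watch list.
WINDOW = 8  # assumption: arbitrary window length chosen for this example


def windows(seq: str, k: int) -> set[str]:
    """Return every length-k substring of seq, uppercased."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def build_index(sequences_of_concern: list[str], k: int) -> set[str]:
    """Collect all length-k windows from the watch-list sequences."""
    index: set[str] = set()
    for seq in sequences_of_concern:
        index |= windows(seq, k)
    return index


def screen_order(order: str, index: set[str], k: int) -> list[int]:
    """Return start positions in the order whose length-k window is in the index."""
    order = order.upper()
    return [i for i in range(len(order) - k + 1) if order[i:i + k] in index]


# Made-up strings standing in for a watch list and two customer orders.
toy_index = build_index(["ATGCGTACGTTAGC"], WINDOW)
flagged_order = "AAAA" + "GCGTACGTTA" + "CCCC"
clean_order = "AAAACCCCGGGGTTTT"

hits = screen_order(flagged_order, toy_index, WINDOW)
if hits:
    print(f"Match at positions {hits}: route to a human reviewer")
print("Clean order hits:", screen_order(clean_order, toy_index, WINDOW))
```

In this sketch any hit simply triggers the manual review step, which is where the cost that Diggans describes comes in.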

One solution, some experts say, is to use free, simple, high-quality screening software. In the coming months, two such initiatives are slated to launch.

One system, called SecureDNA, launched as a limited-access pilot this month, with plans to be widely available by the end of 2023. At its core is a database of billions of very short pieces of genetic information, the exact contents of which are a closely guarded secret. A small group of scientists — Esvelt, who is part of the SecureDNA team, calls them “curators” — will eventually maintain and update the tool, which is based in Switzerland. Orders are encrypted and routed to the SecureDNA servers. There, an automated system looks for matches between the order and the database. From initial tests, the SecureDNA team reports in a recent paper, the model is difficult to fool, and the researchers predict it will rarely produce false alarms. (The team plans to submit the paper to peer review after testing SecureDNA on more real-world orders.)

Whether companies will actually get on board remains unclear. In order to maximize security, the system is a bit of a black box. No company so far has committed to turning over its screening process to SecureDNA, Esvelt said, although some companies have agreed to test it.

Twist Bioscience, whose head of biosecurity currently chairs the board of the IGSC, creates made-to-order DNA with a silicon-based DNA synthesis platform. Its technology can generate nearly 10,000 genes at a time.

Visual: Twist Bioscience/YouTube

In 2020, Yassif, at the Nuclear Threat Initiative, began developing a different screening tool in partnership with the World Economic Forum and an advisory panel of experts. Called the Common Mechanism for DNA Synthesis Screening and slated to launch in 2023 under the auspices of a new international organization, the tool will be distributed to companies, who can then use the software to search orders for potential red flags.

“The basic idea is, if we give a tool to companies to make it cheaper and easier to do the right thing, then it’s going to be very appealing for them to just take it,” said Yassif.

Government officials are also moving to encourage more robust screening. Two years ago, the U.S. Department of Health and Human Services began the process of updating its 2010 guidelines, which experts say have become dated. The new guidelines are slated to come out in 2023. Among other changes, they are likely to ask companies to start screening orders for shorter pieces of DNA, rather than just focusing on orders for longer stretches of genetic material. (The goal is to prevent people from buying many short pieces of DNA and then stringing them together into something high-risk.)

In August 2022, California Gov. Gavin Newsom signed into law a bill requiring the California State University system to buy synthetic DNA only from companies that screen their orders, and requesting that the University of California system do the same.

“This is the first legal requirement in the U.S. for a user of synthetic DNA to pay attention to the security safeguards that are in place for what they’re ordering,” said Koblentz, the George Mason University expert, who consulted on the bill.

Ultimately, Koblentz said, the federal government should do more to incentivize good screening. For example, major federal science funders could give grants on the condition that institutions buy their DNA from more secure providers, using their market power, he said, “to require researchers to use biosecurity safeguards.”

So far, there appear to be no plans to apply those kinds of incentives. “Adherence to the revised guidance, like adherence to the 2010 guidance, is voluntary,” wrote Matthew Sharkey, a federal scientist working on the new guidelines, in an email. And, he added, “no federal agency currently requires compliance with them as a condition for research funding.”

Amid other pressing global concerns, biosecurity experts have sometimes struggled to draw attention to the issue. A 2020 essay Koblentz wrote for The Bulletin of the Atomic Scientists is titled “A biotech firm made a smallpox-like virus on purpose. Nobody seems to care.”

Recently, much of the urgency around synthetic DNA security has come from the effective altruism community, a loose-knit movement centered in Silicon Valley that aims to take a rational approach to doing the most good possible. Supporters often throw their energy behind pressing public health issues like malaria treatment, as well as more rarefied concerns, such as rogue artificial intelligence or governance in outer space.

The movement has grown in prominence in the past decade, becoming a major funder of initiatives to prevent human-caused pandemics. Two effective altruism groups, Effective Giving and Open Philanthropy, are underwriting Yassif’s project. Yassif used to work at Open Philanthropy, which is also backing SecureDNA. (In August, Esvelt told Undark that the group was in talks to receive funding from the FTX Future Fund, an effective altruism venture linked to the cryptocurrency exchange FTX. They did not finalize an arrangement, according to Esvelt, and FTX collapsed spectacularly in November amid allegations of misusing customer funds.)

With a new generation of startups bringing benchtop DNA printers to the market, like this one made by DNA Script, it’s only getting easier to manufacture DNA.

Visual: DNA Script/YouTube

Despite these concerns about bioterrorism, the risks remain largely theoretical. Leitenberg, the Maryland biosecurity scholar, began working on biological weapons issues in the 1960s. In a 2005 paper, he argued that people in the field often overstate the risks posed by bioterrorists.

As Leitenberg argues, the U.S. has spent billions of dollars in the past two decades preparing for a bioterrorism attack — but the threat, at least so far, has not materialized. “The real bioterrorists,” he said, “still haven’t made a single thing.” Lab accidents, he argues, pose a far greater risk than a rogue actor.

What’s clear is that the challenge of regulating the synthetic DNA industry is only compounding — in particular because it’s getting easier to manufacture DNA. If DNA synthesis companies are printshops, a new generation of startups are now making at-home printers: so-called benchtop machines, some retailing for under $100,000, that make it possible to custom-print DNA in the laboratory.

Both SecureDNA and the Common Mechanism hope to one day allow companies to incorporate security tools directly into benchtop devices, so that they can remotely block the production of certain sequences.

The new benchtop technology, said Yassif, has the potential to dramatically expand the circle of people with access to custom-made DNA. The technology, she cautioned, is still in its infancy. “I don’t think the sky is falling today,” Yassif said. But, she added, “I think it’s a wakeup call that we need to be thinking about this now, and building in security now.”

UPDATE: An earlier version of this article imprecisely described Japanese researcher Seiichi Endo as a virologist. While Endo had some graduate training in this area, he did not have an advanced degree in virology.