Forget Killer Robots: Autonomous Weapons Are Already Online

Earlier this year, concerns over the development of autonomous military systems — essentially AI-driven machinery capable of making battlefield decisions, including the selection of targets — were once again the center of attention at a United Nations meeting in Geneva. “Where is the line going to be drawn between human and machine decision-making?” Paul Scharre, director of the Technology and National Security Program at the Center for a New American Security in Washington, D.C., told Time magazine. “Are we going to be willing to delegate lethal authority to the machine?”

As worrying as that might seem, however, killer soldier-robots are largely the stuff of science fiction (for now) — quite unlike a much bigger threat that is already upon us: cyber weapons that can operate with a great deal of autonomy and have the potential to crash financial networks and disable power grids all on their own.

Since 2017, the United Nations Convention on Certain Conventional Weapons has held talks about regulating autonomous weapons: those which can identify and engage targets without human intervention. Contrary to popular belief, this ability doesn’t necessarily require advanced artificial intelligence. In fact, militaries already use some semi-autonomous or human-supervised autonomous weapons, though so far only in limited battlefield scenarios where decision-making and targeting, such as automatically detecting and shooting down enemy missiles, are generally simpler.

Physical weapons with certain autonomous capabilities, such as air and missile defense systems, have been used for decades by countries including the United States, South Korea, and Israel. The first fully autonomous weapon saw military service in the 1980s, when the U.S. Navy temporarily deployed an anti-ship missile that could hunt and attack ships on its own. Many of the tools currently in use function semi-independently, like robotic machine guns, which can operate solo but still have human supervisors capable of overriding their decisions.

But this focus on physical autonomous weapons has glossed over existing cyber weapons: malware designed to attack crucial computer-controlled systems, potentially causing widespread havoc well beyond combat zones. Autonomy has grown far more rapidly in cyberspace.

“Cyber systems have been autonomous since the first computer worm copied itself and spread across different computer drives,” says Scharre, a former U.S. Army Ranger who built much of his post-military career analyzing the military’s use of robotic weapons and artificial intelligence. Scharre notes that autonomy in cyberspace is harder to grapple with than in physical weapons, and “also more urgent.” Among all the potential threats, Scharre describes cyber weapons as the most frightening part of his new book, “Army of None: Autonomous Weapons and the Future of War.”

Today, most people have a passing understanding of what a bad guy with an internet connection can do. But you might be surprised how much of this kind of activity happens without direct human supervision. “Malicious computer programs that could be described as ‘intelligent autonomous agents’ are what steal people’s data, build bot-nets, lock people out of their systems until they pay ransom, and do most of the other work of cyber criminals,” says Scott Borg, director and chief economist of the U.S. Cyber Consequences Unit, a nonprofit research institute.

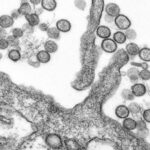

The damage done by such programs is not restricted to credit card theft; cyber attacks also have the potential to inflict widespread damage on physical infrastructure. In 2010, for example, the Stuxnet worm developed by U.S. and Israeli experts caused centrifuge equipment in Iran’s nuclear enrichment facilities to spin out of control. During the past several years, hackers suspected to be affiliated with Russia have shut down portions of the power grid in Ukraine at key periods of political tension and open military conflict. And in 2017, North Korea was alleged to have unleashed the WannaCry ransomware worm that infected computers in dozens of countries and shut down access to hospital computers in the U.K.’s National Health Service, forcing some facilities to turn away non-critical patients or divert ambulances.

In the last decade, security researchers have demonstrated that basically any device with a digital component — including heart implants and modern cars — can be targeted. Societal vulnerability to cyber attacks will likely only increase as smart-home devices, and eventually self-driving car technologies, become widespread. Attackers no longer need access to military long-range aircraft or missiles to threaten the homeland — they only need a computer and internet access. It’s not a stretch to imagine the risk such attacks present for human lives.

“We’re mostly doing things like using cyber weapons of various kinds without an overview of where this is going, what the precedents are, and how we should be doing this,” Borg said. “We’re not having public discussions of the sort that would provide a foundation for consensus policies.”

The disconnect between the perceived urgency to ban “killer robots” and the lack of alarm over cyber weapons prompted Kenneth Anderson, a professor of law at American University who specializes in national security, to publish a 2016 paper in the Temple International and Comparative Law Journal. Anderson points out that cyber weapons could likely cause far greater destruction than “killer robot” technologies. Compared to military hardware, cyber weapons are relatively cheap to produce or acquire — and their self-replication abilities foster the potential for uncontrollable and dangerous situations.

Given their danger, Anderson asks: “Where is the ‘Ban Killer Apps’ NGO advocacy campaign, demanding a sweeping, total ban on the use, possession, transfer, or development of cyber weapons — all the features found in today’s Stop Killer Robots campaign?”

To date, governments and international organizations have still not agreed on who should be allowed to use autonomous cyber programs, or for what purposes. “In practice, we already see a universe of malware — much of it quite autonomous, although not quite intelligent — attacking an endless number of innocent targets,” says Alexander Kott, chief of the Network Science Division of the U.S. Army Research Laboratory.

Of course, not all autonomous cyber programs would be offensive — some might be designed to protect weapons or infrastructure from attack. “The U.S. Department of Defense policy imposes very strong restrictions on autonomous and semi-autonomous weapon systems, and requires them to remain under meaningful human control,” Kott says. “It is not clear whether a cyber-defense software would fall into this category — although it probably should, if an error of an autonomous agent can cause threatening consequences.” Kott takes a practical better-safe-than-sorry stance; he’s actually a co-author of a 2018 North Atlantic Treaty Organization (NATO) research group report envisioning a future where an autonomous agent defends NATO computer networks and combat vehicles from enemy malware.

The United States isn’t the only one questioning how to regulate autonomous cyber weapons programs, although too often, there’s been a tendency for these conversations to fall back into discussing hardware. In 2013, for example, the United Nations Human Rights Council Special Rapporteur released a report about “lethal autonomous robotics,” which declared that “robots should not have the power of life and death over human beings.” The report also recommended that countries temporarily halt some related technological development.

The report sparked interest in the Human Rights Council, and then within the framework of the Convention on Certain Conventional Weapons, a group that typically deals with physical weapons like mines and explosive devices. But the talks established an early emphasis on robotic hardware, rather than the dangers of cyberspace.

“Most people are thinking about autonomous weapons as physical weapon systems,” says Kerstin Vignard, deputy director for the United Nations Institute for Disarmament Research (UNIDIR). “Yet, for the most part, increasing autonomy — in cyber capabilities and in conventional weapons — is intangible. It depends on code.” By isolating these discussions, organizations risk overlooking how physical weapons and cyber weapons could soon directly impact one another.

One significant overlap in cyber and physical battlefields comes from operations using cyber weapons to disable or even take control of more traditional military weapons. According to UNIDIR, computer-controlled missile defense systems, drones, or robotic tanks could be vulnerable to cyber attacks — imagine the perils of shutting down communications or power supply at a critical moment in battle. A more insidious attack might cause autonomous weapons to misidentify friendly forces as enemy targets. Hackers could even take full control of autonomous weapons and turn them against their owners.

Complicating the issue is the fact that deep learning — the most popular machine learning technique currently in use for artificial intelligence applications — is, from a human perspective, like a black box. Because of the complex ways it processes data to make decisions, it’s difficult for human soldiers to tell if their autonomous military robots or drones are working properly. Even the best AI researchers usually have no way of fully understanding how deep learning algorithms come up with their results.

All these reasons can make military robots particularly attractive targets for cyber weapons. It’s all too easy to see how eagerness to counter the physical autonomous weapons of rival militaries might escalate into a cyber arms race, involving both offensive and defensive operations.

Future autonomous weapons could be dangerous even through unintended interactions, as the software of different systems collides. Software algorithms without much autonomy have already demonstrated this risk through various recent stock market “flash crash” incidents. Software interactions involving autonomous weapon systems could spiral out of control just as quickly, before humans could intervene, and with potentially far deadlier consequences.

In 2015, UNIDIR held a conference to discuss the dangers of cyber weapons. But despite such periodic meetings, there hasn’t been serious talk among governments about banning these types of weapons — or the practical ability of the U.N. to enforce such a ban. Nor is there much appetite for restricting access to the latest artificial intelligence techniques, the way nuclear weapons technology has been internationally controlled.

In the absence of international guidelines, it’s been left to individual companies and researchers to decide their approach. The American tech giant Microsoft proposed a Digital Geneva Convention in 2017, calling for an international agreement on restraining the use of cyber weapons and cyber offensive operations. And earlier this year, Microsoft floated the idea for a new international organization that could focus specifically on cyberspace and cybersecurity issues — though the company’s Digital Geneva Convention has so far failed to get Silicon Valley rivals like Amazon, Apple, and Google to sign on.

Google may be weaving its own path through the ethical minefields involving physical and autonomous cyber agents. On June 7, the tech giant published a set of company principles that ruled out developing AI for weapons and technologies intended to cause or facilitate direct harm to people — but it retained the option to work with governments and the military on developing AI applications for cybersecurity.

In the meantime, Vignard and other experts say there are at least benefits to making governmental representatives and military commanders more aware of the interactions between cyber and physical weapons when discussing autonomous systems. After all, leaders may demonstrate extra caution in deploying autonomous weapons if they know such weapons could easily fall under enemy control. As Scharre says, “Unless you can secure them, it’s kind of crazy to delegate a lot of autonomy to a lethal military system.”

Jeremy Hsu is a freelance journalist based in New York City. He frequently writes about science and technology for Backchannel, IEEE Spectrum, Popular Science, and Scientific American, among other publications.

Archived comments are below.

“The Matrix,” “The Terminator,” and, very lately, “Alien: Covenant” – just Hollywood paraphernalia? – already foresaw this possible future. If we can imagine it, it’s because it’s possible.

I believe it’s something we will have to live with because War Budgets – whose main goal is to prevent War itself, at least in the line of Sun Tzu’s teachings – need cost-efficient solutions. Just think of the lives saved by GPS-guided armament vs. traditional conventional bombing…

Machines – a new world for Tools – pervade our world, more and more. Maybe we can teach machines to learn Sun Tzu’s teachings with regard to War… or, maybe, maybe, Emotions and Love, as in “Blade Runner.”

Pandora’s Box was opened a very long time ago. Now let’s do the best we can with courage and boldness.

Artificially intelligent military battle offensive terminal/terminator. aka AIMBOT.