At an international conference on kidney transplantation in 1963, a disagreement broke out about exactly when a patient should be considered dead enough to become an organ donor. One doctor stood up, angrily declaring that he was not “going to just wait around for the medical examiner to declare the patient dead. I’m just going to take the organ.” The sentiment wasn’t as shocking as it sounds; there weren’t any solid criteria for brain death at the time, and many doctors were asking why they should wait on the dead and dying to save the living.

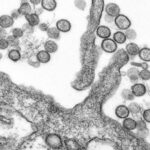

Medicine has long been shadowed by the specter of the resurrection men who dug up and raided recently buried coffins in the dead of night to supply 19th-century anatomists with objects for study. The need for grave robbers had largely been obviated by body donation programs when, in 1954, the first successful kidney transplant at the Peter Bent Brigham Hospital in Boston kicked off the race to transplant other organs. And physicians weren’t willing to confine themselves to those like the kidney that the human body has in duplicate. They wanted to transplant the heart. And that, of course, requires a donor who will not survive the surgery. The age of resurrection men might well have been over, but the age of what we might call harvest men had only begun.

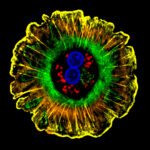

When are you dead enough to donate your organs? It sounds like an easy question to answer, but there is still no single, simply applied medical definition of that curious and ephemeral moment. Before the advent of life support, death usually resulted from a halt in respiration or a stopped heart. By the mid-1950s, however, artificial respiration was possible through the use of machines that filled the lungs with air, oxygenating the blood and thereby keeping the brain and heart working. What, then, was a physician to make of a patient with fixed pupils, no reflexes, and no autonomous breathing who still, mechanically, drew breath? Dead? Dead enough? Even with the advent of electroencephalography (EEG), a technology that could detect brain activity, physicians of the ’50s and ’60s were in uncharted territory. It wasn’t clear if a person needed EEG activity to be considered alive, and even some patients already determined to be brain-dead still had occasional blips.

The problem went from philosophical to actual when, on Jan. 2, 1968, at a hospital in Cape Town, South Africa, a surgeon named Christiaan Barnard took the heart from a 24-year-old Black man named Clive Haupt and placed it in the chest cavity of Philip Blaiberg, a White dentist with chronic heart disease.

Haupt had been bathing in the sea while on a family picnic when he suddenly suffered a subarachnoid hemorrhage resulting in bleeding in the brain. He was admitted to the hospital and placed in the care of Raymond Hoffenberg, the physician on duty at the time. That night, Hoffenberg had a visit from the hospital’s transplant team, who asked him to confirm that the patient was dead. Hoffenberg refused. Something was still going on inside Haupt’s head, activity Hoffenberg called elicitable neurological reflexes. “What sort of heart are you going to give us?” Hoffenberg recalled the head of surgery asking him, flanked by an eager Barnard. Their patient, Blaiberg, was in greater danger of death with every passing minute. They wanted the heart out as soon as possible, and Hoffenberg, fearful of undermining Barnard, agreed to declare Haupt dead by the next morning.

With his new heart, Blaiberg lived for 18 more months, became a media sensation, and paved the way for the future harvest of organs from what are called beating heart donors, that is, living bodies with allegedly dead brains. This, despite the fact that no one could yet agree about what brain death really meant.

While newspapers in apartheid South Africa announced Barnard’s surgical success, some journalists pointed out that Haupt’s heart would now be permitted to go places his body could not. News of Barnard’s heart transplant was also met by the Black press in the United States with trepidation. The Afro-American, a weekly newspaper in Baltimore, warned that doctors might start taking the organs of any Black patient, whatever their ailment.

A few months later, that scenario seemed to play out in Virginia when a factory worker named Bruce Tucker suffered a head injury, was declared unclaimed dead, and had his heart removed, all in the space of 24 hours.

Tucker’s family sued, and the ensuing case established a first legal definition of brain death. The initially skeptical judge was persuaded by testimony from the ad hoc committee at Harvard Medical School, which had been formed to craft a medical definition of brain death that very same year: When a patient falls into a permanent vegetative state — marked by coma, a lack of independent breathing, and “irreversible loss of all functions of the brain” — they are considered brain-dead, even if their heart still beats. And if they are brain-dead, the jury in the Tucker case ultimately decided, they are also harvestable; their organs may be taken, with consent. The Tuckers argued that the doctors did not allow them enough time to respond to inquiries before making their declaration and taking their quarry, but the family ultimately lost in court.

The decision favored the doctors and, in doing so, set the first precedent under which brain death was death in the eyes of the law. While medicine continues to follow this legal precedent, the field of organ transplantation is struggling with a different kind of ethical tangle today.

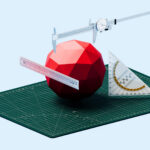

Sometimes called the Red Market, the illicit trade of human organs and tissues has become a lucrative business. Kidneys are especially commodifiable, as the donors can live after surgery. In 2010, the company behind St. Augustine’s Hospital in Durban, South Africa, paid out a large settlement in a cash-for-kidneys scheme; convictions for similar practices were won against doctors at a Kosovo facility around the same time. In 2018, six physicians were arrested in China’s Anhui province for illegally selling organs of accident victims, and an independent tribunal reported to the United Nations Human Rights Council that the Chinese government harvested organs from religious and ethnic minorities on an “industrial scale.”

Much has changed since the first hearts and kidneys were harvested to save lives. Organ transplantation and donation have become safe, effective, and mainstream, a modern miracle we scarcely think of beyond the checkbox on our driver’s license application. What has not changed is the sense of urgency. In 1963, when a doctor stood up to declare he would not wait on a medical examiner’s declaration of death, only a handful of transplants had even been attempted. In 2020, nearly 40,000 transplants were performed in the U.S. alone and more than 100,000 Americans currently have their names on transplant waiting lists. Transplant tourism, whereby ill patients travel to countries with less-stringent regulations to purchase hard-to-source organs, is on the rise.

The ethics are, naturally, complicated. While we justly condemn a traffic in bodies, we are no longer shocked by the removal of organs, even beating hearts, from brain-dead victims. This is medical progress; we can now save lives that once would be forfeit. The medical and legal criteria for brain death may have remained largely consistent since the 1960s, but our cultural expectations have changed. Our medical institutions are held to the highest standards of ethics, but faced with the impending death of a parent or child, how much might we as individuals be willing to overlook?

The harvest continues.

Brandy Schillace, Ph.D., is a historian, author, and editor in chief of BMJ’s Medical Humanities journal. Schillace’s nonfiction books include “Mr. Humble and Dr. Butcher,” “Death’s Summer Coat,” and “Clockwork Futures.”

Comments are automatically closed one year after article publication. Archived comments are below.

Beware the markets created by the scientific advancements in organ transplantation. The surgeons, the pharmaceutical companies, the health care providers all want to profit from the limited supply of organs made available by the dying for the desperately diseased who are adequately insured or wealthy. Black markets already exist for human organs and the medical professionals who participate in them have demonstrated their disregard for the ‘donors’ in their pursuit of income.

Transplant technology should be abandoned as an unethical medical treatment. Organs available for transplant are not plentiful and the technology has made them precious commodities coveted by those willing to exploit the poor and dying to supply them to a wealthy class desperate to live at any cost. Medicine should find transplantation unethical and forbid it, but it has been perverted by market values which supplant medical ethics.

What crude language you use! “Harvest” as though these were crops in a field. Surely as an ethicist, you understand the loaded nature of such language. There is a critical shortage of organs for transplantation in the world today and one day, you, or your loved one, might be on the waiting list. Show a little humanity and soften your gut-wrenching language.