The Replication Crisis Is My Crisis

Sometimes I wonder if I should be fixing myself more to drink. You see, I’m a social psychologist by training — a Professor of Psychology at the University of Toronto — and my chosen vocation has come unglued.

I say this because not a day goes by when I do not hear about one or another of our cherished findings falling into disrepute. A few months ago, an ambitious project aiming to reproduce and verify 100 published experimental findings across psychology found that only about a third could be replicated. The bad news is that this overall figure actually flatters my own sub-discipline of social psychology, where only a quarter of findings could be replicated. Some prominent scientists have criticized this project, but such critiques have mostly gone unheeded because of the many other sources of evidence that all is not right in the state of social psychology.

One after another, landmark concepts in the field have proven unsupported or irreproducible. These include, for example, the idea that power posing can influence levels of circulating hormones and boost confidence, and the notion that reminding people about money can influence their opinions or behavior. Studies showing that the administration of oxytocin — the so-called love drug — can increase trust in humans have come under intense scrutiny, as has the idea that moral misdeeds lead people to wash their hands more frequently, as if to wash away their sins.

Taken individually, none of these might be terribly upsetting. Together, they make it clear: Our problems are not small and they will not be remedied by small fixes. Our problems are systemic and they are at the core of how we conduct our science.

My eyes were first opened to this possibility when I read a paper by Simmons, Nelson, and Simonsohn during what now seems like a different, more innocent time. This paper details how small, seemingly innocuous, and previously encouraged data-analysis decisions could allow for anything to be presented as statistically significant. That is to say, flexibility in data collection and analysis could make even impossible effects seem possible and significant.
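To make the point concrete, here is a minimal simulation sketch. It is not the analysis from the Simmons, Nelson, and Simonsohn paper; it is an illustration under assumptions of my own choosing: no true effect exists, scores are normally distributed with a known standard deviation of 1 (so a simple z-test applies), and the "flexible" analyst measures two outcomes and collects 10 more participants per group if the first look is not significant. The function names and parameter values are hypothetical.

```python
import random
from statistics import NormalDist, fmean


def p_value(group_a, group_b):
    """Two-sided z-test for a difference in means.

    Assumes equal group sizes and a known standard deviation of 1.
    """
    n = len(group_a)
    z = (fmean(group_a) - fmean(group_b)) / (2 / n) ** 0.5
    return 2 * (1 - NormalDist().cdf(abs(z)))


def run_study(rng, flexible, n=20, extra=10, n_outcomes=2, alpha=0.05):
    """Return True if a simulated study reports a 'significant' effect.

    There is no true effect: both groups come from the same distribution.
    """
    outcomes = n_outcomes if flexible else 1
    # One (group A, group B) pair of score lists per outcome measure.
    data = [
        ([rng.gauss(0, 1) for _ in range(n)],
         [rng.gauss(0, 1) for _ in range(n)])
        for _ in range(outcomes)
    ]
    if any(p_value(a, b) < alpha for a, b in data):
        return True
    if not flexible:
        return False
    # Flexible analyst: nothing significant yet, so collect more data
    # and test every outcome again.
    for a, b in data:
        a.extend(rng.gauss(0, 1) for _ in range(extra))
        b.extend(rng.gauss(0, 1) for _ in range(extra))
    return any(p_value(a, b) < alpha for a, b in data)


def false_positive_rate(flexible, n_sims=4000, seed=1):
    """Share of simulated null studies that end up 'significant'."""
    rng = random.Random(seed)
    hits = sum(run_study(rng, flexible) for _ in range(n_sims))
    return hits / n_sims


if __name__ == "__main__":
    print("one outcome, fixed sample:", false_positive_rate(False))
    print("two outcomes, optional stopping:", false_positive_rate(True))
```

Under these assumptions the strict analyst's false-positive rate should land near the nominal 5 percent, while the flexible analyst's rate should be noticeably higher, even though each individual choice looks harmless.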

What is worse, Andrew Gelman, director of the Applied Statistics Center at Columbia University, has made clear that a researcher need not actively and unscrupulously hack his or her data to reach erroneous conclusions. It turns out such biases in data analyses might not be conscious, and that researchers might not even be aware of how their data-contingent decisions are warping the conclusions they reach. This is flat-out scary: Even honest researchers with the highest of integrity might be reaching erroneous conclusions at an alarming rate.

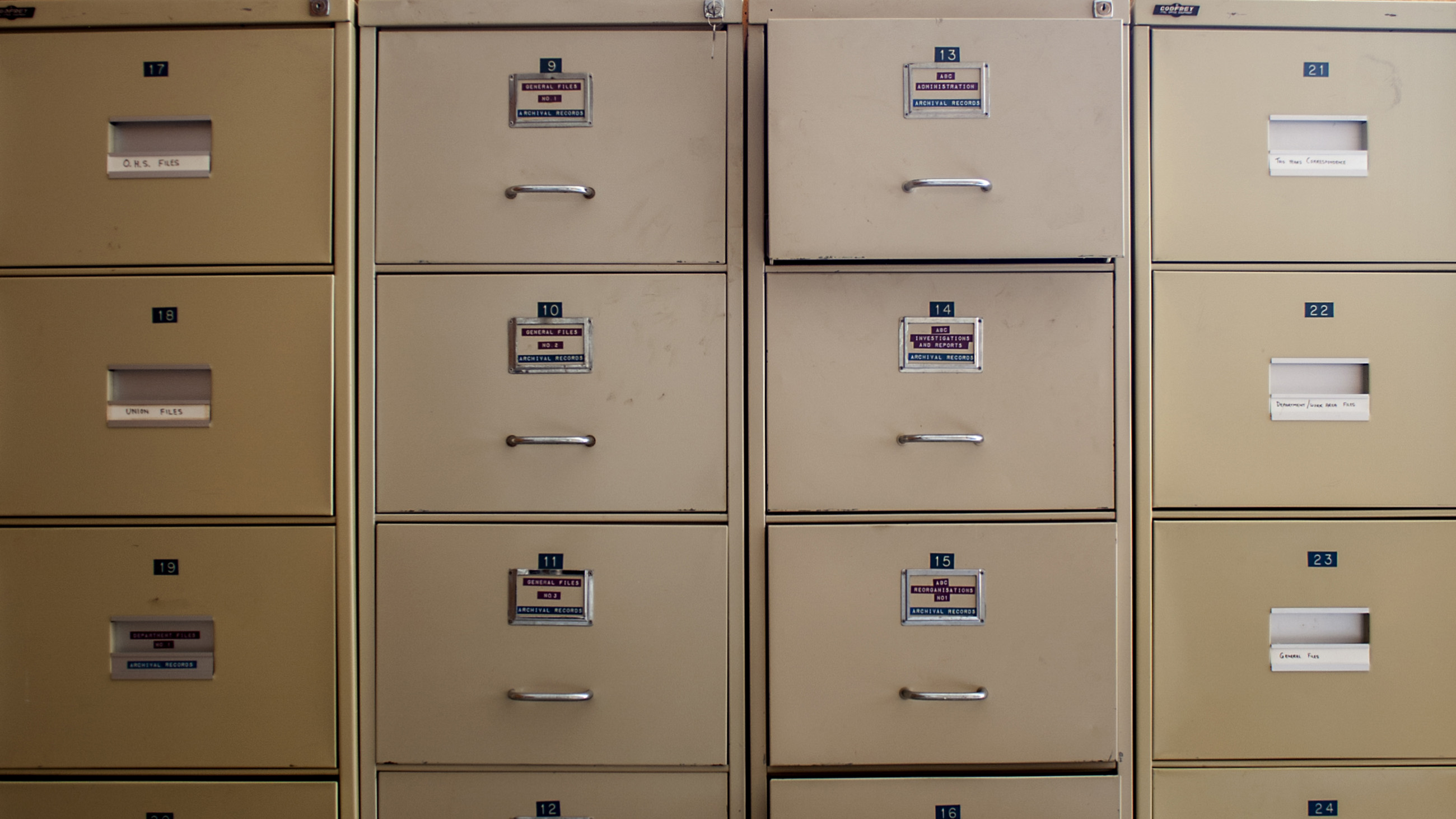

Perhaps the most serious problem, though, is publication bias. As a field, we tend to publish only significant results. This could be because as authors we choose to focus on these. More likely, though, it’s because reviewers, editors, and journals force us to focus on these and to ignore null results. This creates the infamous file drawer effect, in which studies with negative outcomes are tucked away from public view. We have no idea how big or deep that file drawer really is, which makes it nearly impossible to know whether the research that does get published is well supported.
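A rough sketch of what the file drawer can do to a literature is below. Every number in it is an assumption made for illustration, not an estimate from any real field: a small true effect of 0.1 standard deviations, 20 participants per group, a known standard deviation of 1, and a rule that only results significant at the .05 level get published. The function name is hypothetical.

```python
import random
from statistics import NormalDist, fmean


def simulate_literature(true_effect=0.1, n=20, n_studies=5000,
                        alpha=0.05, seed=2):
    """Simulate many small two-group studies and 'publish' only the
    significant ones.

    Returns (mean estimate across all studies,
             mean estimate across published studies,
             share of studies published).
    """
    rng = random.Random(seed)
    critical_z = NormalDist().inv_cdf(1 - alpha / 2)
    standard_error = (2 / n) ** 0.5  # known SD of 1, equal group sizes
    all_estimates, published = [], []
    for _ in range(n_studies):
        treatment = [rng.gauss(true_effect, 1) for _ in range(n)]
        control = [rng.gauss(0, 1) for _ in range(n)]
        estimate = fmean(treatment) - fmean(control)
        all_estimates.append(estimate)
        # Only significant results leave the file drawer.
        if abs(estimate) / standard_error > critical_z:
            published.append(estimate)
    return fmean(all_estimates), fmean(published), len(published) / n_studies


if __name__ == "__main__":
    mean_all, mean_published, share = simulate_literature()
    print("mean effect, all studies:      ", round(mean_all, 3))
    print("mean effect, published studies:", round(mean_published, 3))
    print("share of studies published:    ", round(share, 3))
```

In this toy setup the full set of studies recovers the small true effect, but the published subset should suggest an effect several times larger, and a reader who sees only the journals has no way to tell how many studies stayed in the drawer.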

I think these three ideas — that data flexibility can lead to a raft of false positives; that this process might occur without researchers themselves being aware; and that the size of that negative-results file drawer is unknown — suggest that we might have been fooling ourselves into thinking we were chasing things that are real and robust, when we were, in fact, chasing puffs of smoke.

As someone who has been doing research for nearly 20 years, I now can’t help but wonder if the topics I chose to study are in fact real and robust. Have I been chasing puffs of smoke for all these years?

I have spent nearly a decade working on the concept of ego depletion, for example, which holds that we have a limited reservoir of energy for exercising self-control and other feats of mental discipline. If you use that energy supply suppressing your desire to smoke cigarettes now, you’ll be more likely to break down and eat that last piece of pie later on in the day.

This idea has been massively influential in the field of social psychology, apparently confirmed by many hundreds of studies. It is so well known, in fact, that President Obama is aware of it, and changes his behaviors to benefit from this knowledge.

My research has included work that is critical of the model used to explain ego depletion, and I have been rewarded well for it. I am convinced that this work is the main reason I get any invitations to speak at colloquia and brown-bags these days.

The problem is that ego depletion might not even be a thing. By now, people are becoming aware that a massive pre-registered replication attempt of the basic ego depletion effect involving over 2,000 participants found nothing. Nada. Zip. Only three of the 24 participating labs found a significant effect, and even then, one of these found a significant result in the wrong direction!

There is, of course, a lot more to this replication effort than the main headlines reveal, and there is still so much evidence indicating ego fatigue is a real phenomenon. But for now, we are left with a sobering question: If a large-sample study found absolutely nothing, how has the ego depletion effect been replicated and extended hundreds and hundreds of times? More sobering still: What other phenomena, which we now consider obviously real and true, will be revealed to be just as fragile?

Stereotype threat, for example, is another of the most widely studied topics in social psychology. It describes a phenomenon in which people either are, or consider themselves to be, at risk of confirming negative stereotypes about the social group to which they belong, an awareness that can affect performance on tests, in jobs, and in myriad other situations. Since its inception in 1995, the concept has been a favorite among scientists with a social-justice bent because it offers situational explanations for things like the gender gap in science and math achievement and participation.

Stereotype threat was the focus of my dissertation research. I edited an entire book on the topic. I have signed my name to an amicus brief to the Supreme Court of the United States citing it.

Yet now I am not as certain as I once was about the robustness of this basic effect.

I feel like a traitor for having just written that, or like I’ve disrespected my parents, a no-no according to Commandment number five. But a meta-analysis published just last year suggests that stereotype threat, at least for some populations and under some conditions, might not be so robust after all. Running bias tests on the original papers is not comforting, either.

To be sure, stereotype threat is a politically charged topic and there is still a lot of evidence supporting it. I think a lot more painstaking work needs to be done before I have serious reservations, and rumor has it that another massive effort at replication of stereotype threat is now in the works.

But I would be lying if I said that doubts have not crept in. All told, I feel like the ground is moving from underneath me, and I no longer know what is real and what is not.

To be fair, this is not social psychology’s problem alone. Many allied areas in psychology might be similarly fraught, and I look forward to these areas scrutinizing their own work: developmental, clinical, industrial/organizational, consumer behavior, organizational behavior, and so on all need reviews of their own. Other areas of science, like cancer medicine and economics, face similar problems, too.

But during my darkest moments, I feel like social psychology needs a redo, a fresh start. The question is, where to begin? What am I mostly certain about, and when should I be more skeptical? I feel like there are legitimate things we have learned, but how do we separate wheat from chaff? What should I stop teaching to my undergraduates, and do we need to go back and meticulously replicate everything in the past?

I don’t have answers to any of these questions, but I do have some hope: I legitimately think our problems are solvable. I think the calls for more statistical power, greater transparency surrounding null results, and more confirmatory studies can save us.

But what is not helping is the lack of acknowledgment of the severity of our problems, and the reluctance to dig into our previous work and ask what needs revisiting.

The time is nigh to reckon with our past. Our future just might depend on it.

Michael Inzlicht is a Professor of Psychology, and cross-appointed with the School of Public Policy and Governance, and the Rotman School of Management, all at the University of Toronto. A version of this essay originally appeared on his personal blog, called “Getting Better: Random musings about science, psychology, and what-have-yous.” The views expressed here are his own.