In poll after poll, Americans say they are deeply concerned about rising incivility online. And extensive social media research has focused on how to counteract online incivility. But with Civic Signals, a project of the National Conference on Citizenship and the Center for Media Engagement, researchers took a different approach: If you started from scratch, they asked, what would a flourishing, healthy digital space look like?

They quickly realized that it wouldn’t always be civil.

The Civic Signals project, which began about four years ago, initially involved conducting a thorough literature review and expert interviews in the U.S. and four other countries to identify the values — or “signals” — people want reflected in the design of online spaces. The team then conducted focus groups and polled more than 22,000 people in 20 countries who were frequent users of social, search, and messaging platforms. Gina Masullo, a professor in the School of Journalism and Media at the University of Texas at Austin, brought expertise in incivility research to the group. But “pretty early on in the process,” she said, the team concluded that if one of the goals was to support productive political discourse, civility alone was insufficient.

“It’s not really that we are advocating for incivility,” said Masullo. “But if you are going to have passionate discussion about politics, which we want in a democracy, I would argue, people are not always going to talk perfectly about it.” In her book “Nasty Talk: Online Incivility and Public Debate,” she points out that “perfect” speech can be so sanitized that we wind up saying nothing.

No one is arguing that social media companies shouldn’t combat the most harmful forms of speech — violent threats, targeted harassment, racism, incitement to violence. But the artificial intelligence programs that the companies use for screening, trained using squishy and arguably naive notions of civility, miss some of the worst forms of hate. For example, research led by Libby Hemphill, a professor in the University of Michigan’s School of Information and the Institute for Social Research, demonstrated how white supremacists evade moderation by donning a cloak of superficial politeness.

“We need to understand more than just civility to understand the spread of hatred,” she said.

Even if platforms get better at playing hate Whac-A-Mole, they will need to retool how their algorithms prioritize content if the goal is not just to profit but also to create a digital space for productive discourse. Research suggests that companies incentivize posts that elicit strong emotion, especially anger and outrage, because, like a wreck on the highway, these draw attention and, crucially, more eyeballs to paid advertising. Engagement-hungry folks have upped their game accordingly, creating the toxicity that has social media users so concerned.

What people really want, the Civic Signals project found, is a digital space where they feel welcome, connected, informed, and empowered to act on the issues that affect them. In a social media world optimized for clicks, such positive experiences happen almost despite the environment’s design, said Masullo. “Obviously, there’s nothing wrong with making money for the platforms,” she said. “But maybe you can do both, like you could also make money but as well not destroy democracy.”

As toxic as political discourse has become, it seems almost quaint that a little over a decade ago, many social scientists were hopeful that by allowing political leaders and citizens to talk directly to one another, nascent social media platforms would improve a relationship tarnished by distrust. That directness, said Yannis Theocharis, a professor of digital governance at the Technical University of Munich, “was something that made people optimistic, like me, and think that this is exactly what’s going to refresh our understanding of democracy and democratic participation.”

So, what happened?

Social media brought politicians and their constituents together to some extent, said Theocharis, but it also gave voice to people on the margins whose intent is to vent or attack. Human nature being what it is, we tend to gravitate towards the sensational. “Louder people usually tend to get a lot of attention on social media,” said Theocharis. His research suggests that people respond more positively to information when it has a bit of a nasty edge, especially if it jibes with their political views.

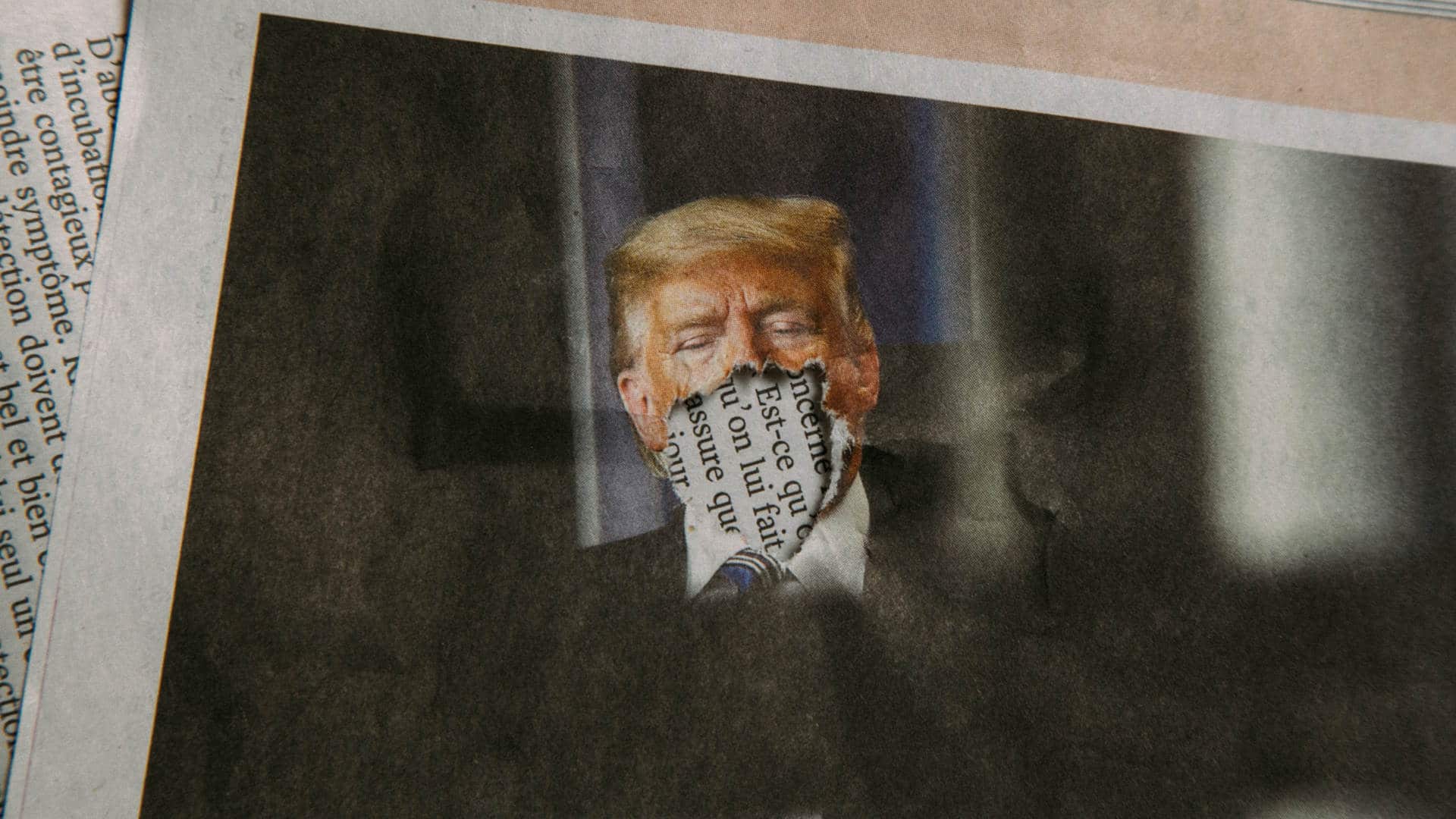

And politicians have grown savvy to the rules of the game. Since 2009, tweets by members of the U.S. Congress have become increasingly uncivil, according to an April study that used artificial intelligence to analyze 1.3 million posts. The results also revealed a plausible reason why: Nastiness pays. The rudest, most disrespectful tweets garner eight times as many likes and ten times as many retweets as civil ones.

By and large, social media users don’t approve of the uncivil posts, the researchers found, but pass them along for entertainment value. Jonathan Haidt, a social psychologist at New York University’s Stern School of Business, has noted that the simple design choice, about a decade ago, to add “like” and “share” features changed the way that people provide social feedback to one another. “The newly tweaked platforms were almost perfectly designed to bring out our most moralistic and least reflective selves,” he wrote this past May in The Atlantic. “The volume of outrage was shocking.”

One solution to rising incivility is to run platforms like a fifth-grade classroom and force everyone to be nice. But enforcing civility in the digital public square is a fool’s errand, Masullo and her Civic Signals colleagues argue in a commentary published in the journal Social Media + Society in 2019. For starters, incivility turns out to be really hard to define. Social scientists use standardized artificial intelligence programs trained by humans to classify speech as uncivil based on factors such as profanity, hate speech, ALL CAPS, name calling, or humiliation. But those tools aren’t nuanced enough to moderate speech in the real world.

Profanity is the easiest way to define incivility because you can just create a search for certain words, said Masullo. But only a small percentage of potentially uncivil language contains profanity, and, she added, “sexist or homophobic or racist speech is way worse than dropping an F bomb here and there.”

Plus, heated conversations aren’t necessarily bad, said Masullo. “In a democracy you want people to discuss things,” she said. “Sometimes they’re going to dip into, maybe, some incivility and you don’t want to chill robust debate at the risk of making it sanitized.” Finally, she said, when you focus on civility as the end goal, it tends to privilege those in power who get to define what’s “appropriate.”

Furthermore, civility policing arguably isn’t working particularly well. Hemphill’s research as a Belfer Fellow for the Anti-Defamation League shows that moderation algorithms miss some of the worst forms of hate. Because hate speech represents such a small fraction of the vast amount of language online, machine learning systems trained on large samples of general speech typically don’t recognize it. To get around that problem, Hemphill and her team trained algorithms on posts from the far-right white-nationalist website Stormfront, comparing them to alt-right posts on Twitter and a compendium of discussions on Reddit.

In her report Very Fine People, Hemphill details findings showing that platforms frequently overlook discussions of conspiracy theories about white genocide and malicious grievances against Jews and people of color. White supremacists evade moderation by avoiding profanity or direct attacks, but they use distinctive speech to signal their identity to others in ways that are apparent to humans, if not algorithms. They center their whiteness by appending “white” to many terms, such as “power,” and they dehumanize racial, ethnic, and sexual minorities by referring to them with plural nouns such as “Blacks,” “Jews,” and “gays.”

A civil rights audit of Facebook published in 2020 concluded that the company doesn’t do enough to remove organized hate. And last October, former Facebook product manager Frances Haugen testified before a U.S. Senate committee that the company catches only 3 to 5 percent of hateful content.

But Meta, the parent company of Facebook and Instagram, disagrees. In a statement forwarded by Meta’s policy communications manager Irma Palmer, which she asked to be attributed only to a “Meta spokesperson,” the company said that “in the last quarter alone, the prevalence of hate speech was at 0.02 percent on Facebook, down from 0.06-0.05 percent, or 6 to 5 views of hate speech per 10,000 views of content from the same quarter the year before.” Even so, the company admitted in a follow-up statement that it will inevitably make mistakes, so it continues to invest in refining its policies, enforcement, and the tools it gives users. The company is, for example, testing strategies such as granting administrators of Facebook Groups more latitude to consider context when deciding what is and isn’t allowed in their space.

Another solution to the problem of hate and harassment online is regulation. As I covered in a previous column, a handful of giant for-profit companies control the digital world. In a Los Angeles Times op-ed about the efforts of Elon Musk, the Tesla CEO and the world’s richest person, to purchase Twitter, Safiya Noble, a professor of gender studies at the University of California, Los Angeles, and Rashad Robinson, president of the racial justice organization Color of Change, pointed out that a select few people control the technology companies that affect an untold number of lives and our democracy.

“The issue is not just that rich people have influence over the public square, it’s that they can dominate and control a wholly privatized square — they’ve created it, they own it, they shape it around how they can profit from it,” wrote Noble and Robinson. They advocate for regulations like those for the television and telecommunications industries that establish frameworks for fairness and accountability for harm.

In the absence of stricter laws, social media companies could do much more to create a space that allows people to speak their mind without devolving into harassment and hate.

In the Very Fine People report, Hemphill recommends several steps that companies could take to reduce hate speech on their platforms. First, they could consistently and transparently enforce existing rules. A broad swath of the civil rights community has criticized Facebook for not enforcing policies against hate speech, especially content targeted at African Americans, Jews, and Muslims.

Social media companies may take an economic hit and even face legal challenges when they don’t allow far-right extremists to speak, Hemphill acknowledges. The Texas law HB 20 would have made it nearly impossible for social media companies to ban toxic content and misinformation. But the U.S. Supreme Court recently put that law on hold while lawsuits against it work their way through the courts. If the Texas law is ultimately overturned, platforms could argue more forcefully for their own right to moderate speech.

In the wake of the Citizens United Supreme Court ruling, which expanded corporations’ rights to free speech under the First Amendment, tech companies “can remind people that they have the right to do what they want on their platforms,” said Hemphill. “Once they do that, they can start to prioritize social health metrics instead of only eyeballs.”

Like Hemphill, many social scientists are making the case for platforms to create a healthier space by tweaking algorithms to de-emphasize potentially uncivil content. Companies already have tools to do this, said Theocharis. They can block the sharing of a post identified as uncivil or downgrade it in users’ feeds so that fewer people will see and share it. Or, as Twitter has tried, they could nudge users to rethink posting something hurtful. Theocharis’ team is exploring whether such interventions work to reduce incivility.

The Civic Signals team recommends that companies focus on optimizing feeds for how valuable content is for users and not just clicks. If companies changed their algorithms to prioritize so-called connective posts — that is, posts that make an argument, even using strong language, without directly attacking other people — then uncivil posts would be seen less and, therefore, shared less and would eventually fade from view, said Masullo.

As for profit, Masullo pointed out that people are unhappy with the current social media environment. If you cleaned up a public park full of rotting garbage and dog poop, she said, more people would use it.

UPDATE: A previous version of this article included a paraphrased statement that was incorrectly attributed to Meta policy communications manager Irma Palmer. The statement and its attribution have been clarified.
