There was a time, not that long ago, when I felt there was some hope of keeping up with developments in artificial intelligence. An article would appear in The New York Times or Scientific American, and I’d clip it out (yes, I’m old school) and tuck it in a file folder marked “AI.” But a few years ago, the breakthroughs started coming too quickly.

BOOK REVIEW — “Life 3.0: Being Human in the Age of Artificial Intelligence,” by Max Tegmark (Knopf, 364 pages).

As I type these words, I’m seeing articles on how AI will change our courtrooms (“Robot judges? Edmonton research crafting artificial intelligence for courts,” the headline reads); how AI will shake up policing; how AI can (allegedly) detect Alzheimer’s disease 10 years before symptoms appear; and, lest we become nervous about the pace of change, this headline from Mashable: “Everybody calm down about artificial intelligence” — all posted within a 24-hour period.

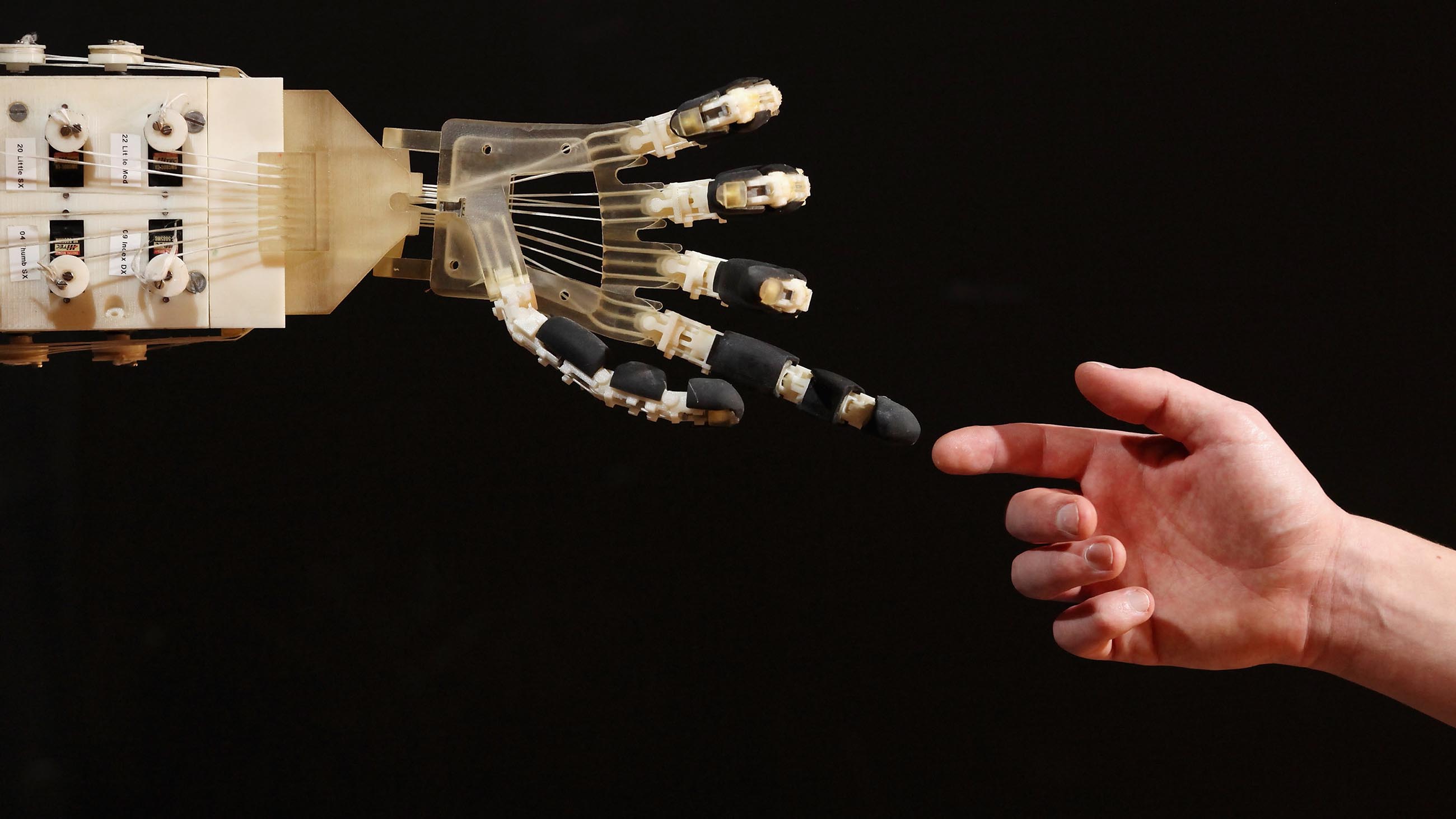

It’s no secret that artificial intelligence systems have already surpassed human capabilities in a multitude of fields — remember when the best chess, Go, poker, and “Jeopardy!” players were human? — and are quickly catching up in many others (driving cars and trucks, language translation, image recognition, diagnosing cancer, and predicting elections, to name a few).

And so we ask ourselves: Where is it all heading? Enter Max Tegmark, iconoclastic mop-topped MIT physicist and co-founder of the Future of Life Institute, whose mission statement includes “safeguarding life” and developing “positive ways for humanity to steer its own course considering new technologies and challenges.”

In his new book, “Life 3.0,” Tegmark focuses on the big picture — where AI is taking us and whether we should be worried — but he also examines some of these dizzying recent developments on their own terms. Take robot judges, for example. It’s often claimed that automating the judicial system would help to counter the known biases of human judges. While racial bias gets the most attention, even seemingly mundane factors can affect verdicts. (Tegmark cites an Israeli study reporting that judges deliver significantly harsher verdicts when they’re hungry; you really don’t want to be sentenced just before lunchtime.)

In principle, a robojudge could zoom through thousands of legal briefs and read up on whatever research might be relevant to a particular case (the latest forensic science papers, for example) in the blink of an eye — all at a fraction of the cost of paying a human judge. Tegmark writes that one day “robojudges may therefore be both more efficient and fairer, by virtue of being unbiased, competent, and transparent.”

Fortunately, he isn’t naïve enough to think it’s that simple. For starters, there’s increasing evidence that AI systems can incorporate human prejudices by absorbing whatever biases were lurking in the data they were trained on. (For example, language-processing AI systems have been found to exhibit human-like sexism, linking the word “programmer” more strongly with the word “man” than with “woman,” while drawing the opposite inference for “homemaker.”)

In a similar vein, a 2016 study found that software used in the judicial system to predict who was at risk of reoffending was biased against African-Americans. Tegmark acknowledges these problems, while also pointing to what is perhaps a deeper conundrum: As AI technology progresses, it becomes increasingly difficult to discern why one particular output is produced instead of another. As Tegmark puts it: “If defendants wish to know why they were convicted, shouldn’t they have the right to a better answer than ‘We trained the system on lots of data, and this is what it decided’?” The same chapter looks at other down-to-earth concerns, such as the military use of automated weaponry and the much-discussed impact of AI on labor and employment.

Things take a more philosophical turn in the book’s second half, as Tegmark ponders the long-term outlook for our machines and ourselves. The big question is, what happens when machines outperform us at everything? Here, Tegmark outlines a number of possible scenarios (they’re even compiled in a handy table) with names like “libertarian utopia,” “benevolent dictator,” “protector god,” “enslaved god,” “zookeeper” and so on. (In some of these scenarios, humans continue to flourish; in others, not so much.)

The key, Tegmark says, is to make sure that any advanced AI we develop has goals that are aligned with our own. Here he cites Nick Bostrom’s paper-clip doomsday scenario — in which a superintelligence programmed to make as many paper clips as possible starts killing humans to harvest their atoms to make even more paper clips — as well as various other possible disasters. He also takes some time to explain how machines — chunks of metal — can have goals in the first place. The answer, he says, comes from basic physical principles. I won’t try to summarize the argument here, but it involves thermodynamics, complexity, and Darwinian evolution; the recent (and still controversial) work of Tegmark’s MIT colleague Jeremy England gets a nod.

Of course, no discussion of AI is complete without an examination of that most ancient of puzzles, the problem of consciousness — and Tegmark obliges, with a remarkably thorough run-through of today’s best guesses as to how our brains give rise to subjective experience. While some thinkers would object, Tegmark seems quite comfortable with the idea of the brain as a computing machine (an analogy that goes back at least to the dawn of modern computer science). While our brains are biological, thinking is said to be “substrate independent” — that is, a mind can do whatever it does regardless of what it’s made of. “In short,” writes Tegmark, “computation is a pattern in the spacetime arrangement of particles, and it’s not the particles but the pattern that really matters!” (Four of his eight chapters end with exclamation marks — but then, it is pretty exclamation-worthy stuff.)

As heady as some of the ideas are, “Life 3.0” is significantly more grounded than Tegmark’s previous book, “Our Mathematical Universe”; though sweeping and provocative, that book’s musings on multiple universes and platonic philosophy struck several reviewers as overly speculative. In contrast, both Science and Nature, and quite a few newspapers, have given “Life 3.0” positive reviews. To be sure, readers who have plowed through Bostrom’s 2014 book, “Superintelligence,” or Erik Brynjolfsson and Andrew McAfee’s “Race Against the Machine,” from 2011, will find some familiar material — and yet there’s plenty in “Life 3.0” that’s new and noteworthy. AI is poised to profoundly shape our lives — indeed, it has already begun to do so — and Tegmark is telling us, quite reasonably, that this is worth thinking very carefully about.

Dan Falk (@danfalk) is a science journalist and former Knight Science Journalism fellow based in Toronto. His books include “The Science of Shakespeare” and “In Search of Time.”
