Preparedness Spending Exploded After 9/11. Is That Helping Now?

On the final day of 2001, fewer than four months after the September 11 terrorist attacks, President George W. Bush fielded a few questions from reporters near his ranch in Crawford, Texas. One of them asked him what kind of homeland security developments Americans could expect to see in the coming year. Bush did not begin by talking about airport security, or policing, or domestic surveillance, or any of the myriad ways that American society was transforming after 9/11. Instead, he started talking about public health. His administration’s priority in the coming year, he said, was “making sure the public health systems work.”

The attacks, which were followed just a week later by a series of anthrax-laced letters that killed five people and sickened 17 more, had highlighted major shortcomings in the country’s disconnected, long-underfunded public health infrastructure. So in early January 2002, according to an analysis from the Congressional Research Service, Bush approved $2.8 billion in spending on preparation for “potential biological, disease, and chemical threats,” including $940 million in emergency funding to be distributed to the country’s state and local health departments. In the years that followed, federal lawmakers would invest billions more in preparations for a public health emergency — including the possibility of a pandemic. According to one estimate, the federal government spent more than $61 billion on biodefense in the decade after the attacks, including general public health upgrades and more specialized preparations for an attack.

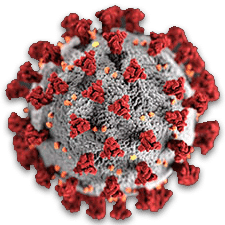

Now, nearly 20 years after 9/11, the United States finds itself once again facing a sweeping, unprecedented national emergency. Just this week, the country passed a grim milestone, as the death toll from Covid-19 surpassed that of 9/11.

The bungled response to the Covid-19 pandemic — including a failure to produce enough tests for the virus, shortages of critical medical supplies, understaffing at local health departments, and sluggish federal action overall — has highlighted, once again, profound vulnerabilities in the country’s public health system. As the number of infected Americans mounts, and as models increasingly point to a possibly dire near-future of hospital bed shortages, shortfalls in doctors and nurses, equipment scarcity, and abject triage, some policy experts are now wondering what happened to all that post-9/11 public health infrastructure funding, and why those investments are not helping the country now.

After 9/11, “we spent a lot of money on preparedness programs,” said Wendy Mariner, a health law and policy scholar at Boston University. “The federal government has provided money to states and organizations to develop preparedness plans. There have been drills. And yet, we don’t seem to have what we really need, which is the supplies and the resources to take care of everyone.”

Edward Richards, a public health policy law expert at Louisiana State University, expressed similar sentiments. “For three or four years [after 9/11], maybe five years, the feds rolled out funding to local health departments,” he said. “They hired extra epidemiologists and some lawyers who knew something about this, but as soon as the grants were cut off and the government got interested in something else, all those people were fired.”

Many experts argue that, whatever the shortfalls the nation is now facing amid the novel coronavirus, the situation would be much worse were it not for many of the investments made in various aspects of public health in the nearly 20 years since the 9/11 attacks. Perhaps more money could have been spent on this program, or diverted to that agency, defenders suggest, but hindsight is always 20/20. Howard Koh, a professor at the Harvard T.H. Chan School of Public Health and former public health commissioner for the Commonwealth of Massachusetts, for example, suggested it was a mistake to question whether government spending after the attacks was over-allocated to, say, bioterrorism preparation, rather than to planning for a natural pandemic like the one now gripping the world. “One doesn’t want to be a Monday morning quarterback on that,” he said.

While perspectives vary, tracing the history of post-9/11 investments in public health does seem to reveal just how much the present response to Covid-19, for all its flaws, owes to that last nationwide crisis. At the same time, it also offers a stark warning on how political inattention, questionable spending choices, and shifting policy priorities still left the U.S. public health system unprepared for a pandemic — a threat that many experts knew was almost certain to arrive.

By the early 1990s, many public health departments around the country had become victims of their own success. Mass vaccination campaigns from the mid-20th century onward had all but eliminated some of the very diseases, including measles and mumps, that had helped make public health seem so essential in previous generations. Public health officials — tasked with monitoring disease spread, educating the public, and responding to acute outbreaks — had begun focusing more on chronic conditions like heart disease and cancer.

That started to change in 1992 with the publication of a major report from the Institute of Medicine, now known as the National Academy of Medicine, warning about the threat of new infectious diseases. An influenza pandemic, like the one that had killed 50 million people worldwide in 1918 and 1919, remained a possibility, the IOM report noted. Drug-resistant strains of tuberculosis were spreading. And a combination of population growth, land-use changes, and increased travel in places like Central Africa and East Asia had accelerated the emergence of new diseases.

The report set off alarms in the field and changed how public health experts thought about the contemporary threats they faced. “Their report,” said Ann Marie Kimball, an emeritus professor of epidemiology at the University of Washington School of Public Health, “began to galvanize public health around the idea that the [pandemic] threat was not over.”

Nearly a decade after that IOM analysis, however, public health infrastructure was still in bad shape. In March 2001 — just six months before the 9/11 attacks — the U.S. Centers for Disease Control and Prevention sent a report to the Senate on the state of the nation’s public health system. The news was grim: The country’s more than 3,000 state and local public health departments, they wrote, were severely underfunded. As of 1997, nearly 80 percent of local health departments were headed by someone who lacked a graduate degree in public health. Only one-third of Americans, the report said, were “effectively served” by their public health department. Many health departments, which among other things were expected to spearhead the tracking and reporting of infectious diseases, lacked reliable internet access and other basic communication tools.

One local health department, the CDC report noted, “confessed to not reporting diseases because doing so would have meant a long-distance phone call.”

Then came al-Qaeda and 9/11. CDC experts rushed to New York City to help track the health fallout from the collapsed Twin Towers. A week later, someone began sending anthrax-laced letters to prominent media and political figures. (The culprit has never been confirmed, but evidence points to a government scientist who died by suicide in 2008.) Frightened people overwhelmed state health departments and laboratories with suspicious powders.

This complement of threats produced “a dramatic turning point for public health,” said Koh, who also served as a senior public health official in Barack Obama’s administration from 2009 to 2014. The attacks, Koh told Undark, “prompted a national awakening that major public health investments were mandatory to protect people in the future against disasters ranging from bioterrorism to hurricanes to infectious disease pandemics, like what we’re witnessing now.”

This realization, Koh said, was part of a larger realignment around how the country thought about disasters. “The previous paradigm was, disasters are unusual and it’s not part of what we do. And the new paradigm was, disasters are inevitable, they will always come, and we have to be as ready as possible,” he said. Public health began to transition into being what Koh and others call “a preparedness field.”

That transition was propelled by billions of dollars of federal funding. The Public Health Emergency Preparedness program, launched in 2002, received $940 million in its first year of operation. The money went to the CDC, which distributed it to local and state officials. The Hospital Preparedness Program, designed to help hospitals prepare for a surge of cases during a national emergency, launched the next year with an initial $515 million of funding.

Federal officials also took a small, new initiative to warehouse medical supplies that could be useful during an emergency — the National Pharmaceutical Stockpile — increased its budget by a full order of magnitude, and later renamed it the Strategic National Stockpile. That stockpile has been an important source of supplies during the Covid-19 outbreak, though officials now warn its store of critical protective gear, including gloves and N95 masks, is nearly depleted.

The changes weren’t only reflected in budget lines. Following the attacks, the CDC enlisted legal scholars to draft legislation codifying sweeping state powers that could be enacted in times of public health emergency. And they did so over the vigorous opposition of some civil liberties advocates, who argued that the legislation gave too much authority to state officials. (At least 20 states implemented versions of that legislation anyway, which may get some of its first major tests in the coming weeks.)

In total, the country began putting billions of dollars more into preparations for a biological attack from a rare pathogen like anthrax or smallpox, as well as toxins like ricin, a poison found in castor beans.

That bioterrorism money was spread across the federal government, including the CDC, and much of it was used for big national initiatives. Project BioShield, for example, launched in 2004 with a 10-year $5.6 billion commitment, and began stockpiling vaccines and treatments for things like anthrax and botulinum toxin.

Biodefense money also flowed into local health departments, which suddenly found themselves flush with cash. Newspaper reports from the early 2000s, as well as interviews with public health officials excerpted in a 2006 academic book, “Are We Ready: Public Health Since 9/11,” suggest that departments directed much of this funding toward core needs: They upgraded aging laboratory equipment. They bought new and better communications tools. They began doing more planning exercises with emergency responders. And they hired epidemiologists and other critical staff.

It was not just 9/11 and the anthrax attack that propelled this surge. An outbreak of SARS – another coronavirus pathogen – in East Asia in 2003 raised global fears of a pandemic. The Bush administration also helped pass legislation in 2006 increasing influenza pandemic preparedness, and pushed health departments to write out plans for the case of a severe outbreak. Many of these systems then got test runs during the H1N1 flu outbreak in 2009, which prompted its own round of new investments in public health preparedness.

Jeffrey Levi, a public health policy expert at George Washington University and, from 2005 to 2015, the director of the influential advocacy group Trust for America’s Health, said those investments are now paying some dividends. “Give credit where credit is due,” he said. “In addition to the post-9/11 investments, the pandemic preparedness work that the George W. Bush administration did was incredibly important.”

Since 2001, Koh said, “we’ve had infrastructure built, we’ve had offices created, we’ve had plans put forward, we’ve had drills and communications and exercises.”

“We are much better prepared than we were 20 years ago,” he added. “There’s just no doubt about it.”

Still, within just a few years of 9/11, public health officials and researchers were raising concerns that the focus on bioterrorism was actually detracting from core public health research and infrastructure — and potentially endangering Americans.

Tensions flared around a federal plan, supported by Vice President Dick Cheney, to immunize hundreds of thousands of soldiers and civilians against smallpox in the run-up to the U.S. invasion of Iraq in 2003. Smallpox vaccination can pose serious risks, and the disease had been eradicated, with samples remaining in just a handful of high-security laboratories in the U.S. and Russia. Nonetheless, concerned about the possibility of a smallpox attack leading to a national pandemic, officials began pushing for immunization.

“It was nuts,” said George Annas, a public health scholar at Boston University. “There was no smallpox in the world.”

By January of the following year, more than 500,000 military personnel had been vaccinated, along with some 39,000 civilian health care workers.

According to the CDC, 97 civilians experienced severe complications associated with the vaccine.

Those kinds of initiatives also strained staff at local health departments, where officials, already overextended, suddenly found themselves managing bioterrorism response on top of all their other responsibilities. In 2004, for example, the public health director in New Haven, Connecticut, told a reporter from the Hartford Courant that he was spending 70 to 80 percent of his time on bioterrorism preparedness work. “I wish I could be doing chronic disease prevention, even infectious disease prevention, spending more time working on the social aspects of mortality and morbidity,” he said, “which is what public health should be doing.”

An essay that year by a public health expert and two medical doctors, published in the American Journal of Public Health, was more blunt: “The present expansion of bioterrorism preparedness programs,” they wrote, would “continue to squander health resources.” Such programs, they concluded, “have been a disaster for public health.”

Researchers expressed similar concerns. In 2005, a group of more than 750 microbiologists signed an open letter to the National Institutes of Health, complaining that the new focus on extremely rare threats like tularemia, anthrax, and plague was siphoning resources away from critical research. “The diversion of research funds from projects of high public-health importance to projects of high biodefense but low public-health importance represents a misdirection of NIH priorities and a crisis for NIH-supported microbiological research,” they wrote.

Indeed, while pathogens like influenza reliably kill thousands of Americans every year, the U.S. has had only two bioterror attacks in modern times – the anthrax attacks, and an incident in 1984, when a fringe religious sect poisoned 10 salad bars in Oregon with Salmonella bacteria.

Such sentiments reflected a growing tension between federal priorities – focused on terrorism – and the needs of local health departments, said David Rosner, the co-director of the Center for the History and Ethics of Public Health at Columbia University and the author, with Gerald Markowitz, of “Are We Ready?” Speaking of the post-9/11 period, Rosner said that “the public health community in some sense saw [the] moment as a means of buttressing a field that had gone into steep decline over the course of the previous three decades.”

“This emergency funding that the government was providing for smallpox inoculations, for emergency responders, was seen as a possible means by which a deeply defunded and underfunded field, mainly state and local departments of health, would receive funding,” Rosner said. Departments hoped they “could use this funding to buck up not so much their emergency response but their general infrastructure.”

Public health officials, Rosner said, “really thought, well, okay, smallpox is a crazy idea, but we’ll be able to buck up our systems” with funding for these kinds of programs. Instead, Rosner said, federal officials often insisted that money go to bioterrorism-specific investments, rather than infrastructural upgrades that would be useful in any kind of disaster.

Even as billions flowed into biodefense, core funding for public health soon flagged. In the economic decline of the early 2000s, many states had cut their public health budgets — in at least one case because lawmakers thought the new federal funding would cover the gap. A 2007 analysis, authored by Levi and several colleagues, found that, while CDC spending on terrorism preparation had increased nearly 10-fold since the attacks, its budget for core functions – like tackling infectious diseases – had actually dropped.

During the recession that began in 2008, public health departments across the country were also forced to cut thousands of staff. And other long-term investments, like an emergency fund for pandemics and other public health disasters set up in 1983, simply never received support after 9/11, or after any other subsequent disaster. The account was last funded in 1999, and has been sitting empty for eight years.

Federal funding for public health emergency preparation began to wane, too, and the reductions have continued through today. The $940 million Public Health Emergency Preparedness program and the Hospital Preparedness Program, for example, have experienced cuts of 30 percent and 50 percent, respectively, since their peak funding after 9/11. (Adjusting for inflation, the decline is more like 50 percent for PHEP, and 65 percent for Hospital Preparedness.) “That was meant to be a steady funding stream to support new infrastructure,” Levi said. “But that money has been losing its value over time.”

Levi and a group of colleagues estimated last year that, with a designated $4.5 billion annual investment, the U.S. could build a strong, well-prepared public health system. Such a system, Levi and his colleagues argue, would, at a minimum, make sure every public health department had the staffing, funding, and expertise to surveil community health, prepare capacity for emergencies, and build partnerships with other agencies and community organizations. “Outside the context of an emergency” like the Covid-19 pandemic, Levi said, that “sounded like a huge amount of money.” Last week, President Donald J. Trump signed a $2 trillion pandemic stimulus package. “Now, it doesn’t sound so bad.”

Talk to public health officials long enough, and you will usually hear some version of the following complaint: When we do our job well, fewer people get sick. And when fewer people get sick, people forget why we exist in the first place. “If they’re really successful,” Kimball said of public health officials and professionals, “they don’t make a lot of noise.”

That cycle of disaster, investment, and simmering neglect has frustrated public health experts for years. “These disasters are inevitable,” Koh said. “They will always come. When we get through this one there will be another one soon, and we just have to be more dedicated as a society to investing in the long term for prevention, public health, and preparedness.”

Not everyone is optimistic about that future.

Rosner pointed out that, while policymakers have kept up the flow of money for some national security measures after 9/11, many of the public health provisions put in place were “temporary fixes.”

The current crisis, he worries, will follow a similar pattern.

“It’ll pass, and then we’ll forget about it again,” he said. “That’s my prediction.”