Rifling Through the Evidence: Uncertainty in Firearms Analysis

In 2015, Peter Stout, head of the Houston Forensic Science Center, began administering a test that was designed to not look like a test. Like many crime labs in the United States, analysts at the center routinely perform forensic firearms identification, meaning they inspect crime scene evidence — in this instance, the microscopic patterns on bullets and cartridge casings. By comparing these markings, the examiners aim to pair the patterns with marks made when firing a specific gun.

Validation studies, or so-called black box studies — many conducted in the wake of assessments by scientific advisory groups — attempt to measure how accurately examiners linked casings and bullets to specific guns; these studies have consistently found low error rates. But critics have pointed out that examiners in such studies self-select; they volunteered to take a proficiency test. This leaves open key questions: Did the examiners behave differently when they knew they were taking a test? And if so, did that affect their accuracy?

The test at the Houston Forensic Science Center tried a different tack. The researchers slipped mock samples in with routine casework. Real-world evidence doesn’t always come with an answer key, so these blinded samples with known answers also allowed researchers to check the examiner’s conclusions. The goal was to add a quality-control measure, and also attempt to do something that some practitioners had considered practically impossible: to prevent examiners from knowing that they were being tested. As a newsletter from the lab explained in 2017, “This means that at any given time an analyst has no idea whether they are working on a real case or taking a test.”

“It’s why our data are so interesting to everybody,” Stout said in an interview, “because ours are blind.” Stout and several colleagues published the preliminary dataset in 2022 without much fanfare. But a more recent follow-up paper unpacking and expanding a small pocket of the original data reignited the debate over black box ballistics studies.

In 2025, in collaboration with Stout and his colleagues, Nicholas Scurich, a professor of psychology and criminology at the University of California, Irvine, published a paper looking at the Houston data. Scurich was interested because the data were blinded, and he wanted to take a closer look at a subset of the data: cases when the Houston firearms examiners had not reached a conclusion. Even though the test was supposed to be blinded, examiners knew test cases could be inserted into the workflow, and sometimes they discovered those mock samples. When they did so, the results changed: Examiners appeared less likely to make identifications or eliminations, labeling a higher percentage of cases as inconclusive. (These so-called “inconclusives,” in turn, potentially alter the error rate because, as the 2025 paper explained, examiners “can avoid risking an error on a test item by calling inconclusive.”) As Stout put it, “There seems to be something different going on when they know they’re being tested versus when they don’t.”

While there had been hints that examiners behaved differently when they knew they were being tested, Scurich said, practitioners sometimes passed this off as speculation. “Their response was like, ‘That’s just kind of indirect evidence, that’s not direct proof.’” But his team’s analysis of the blinded data from Houston, he said, offered “direct proof.”

But others disputed the findings. In an email, Max Guyll, an associate professor of psychology at Arizona State University, wrote: “There are a number of issues that prevent drawing firm conclusions from those data.” Guyll and Todd Weller, a forensic scientist, declined to speak on-the-record about the specifics. But, in an email, Weller said important context was missing from his quoted testimony in the 2025 paper, and suggested that comparing blind testing conducted nationally, among numerous agencies, with specific design protocols, to the testing done within one lab “is comparing apples to oranges.” (Erich D. Smith and Eugene “Gene” Peters, at the FBI, both of whom have authored black box studies, did not respond to emailed requests for comment; the agency’s Office of Public Affairs declined to comment.) In an email, Michael A. Peat, the editor-in-chief of the journal where the 2025 paper was published, said the journal had initiated an investigation due to concerns raised about the paper.

“There’s been so much controversy over measuring firearms’ error rates, that this is just more fuel for that fire.”

Scurich, for his part, said the forthcoming criticisms of his 2025 analysis did not amount to much; he did not respond to an email asking about the investigation.

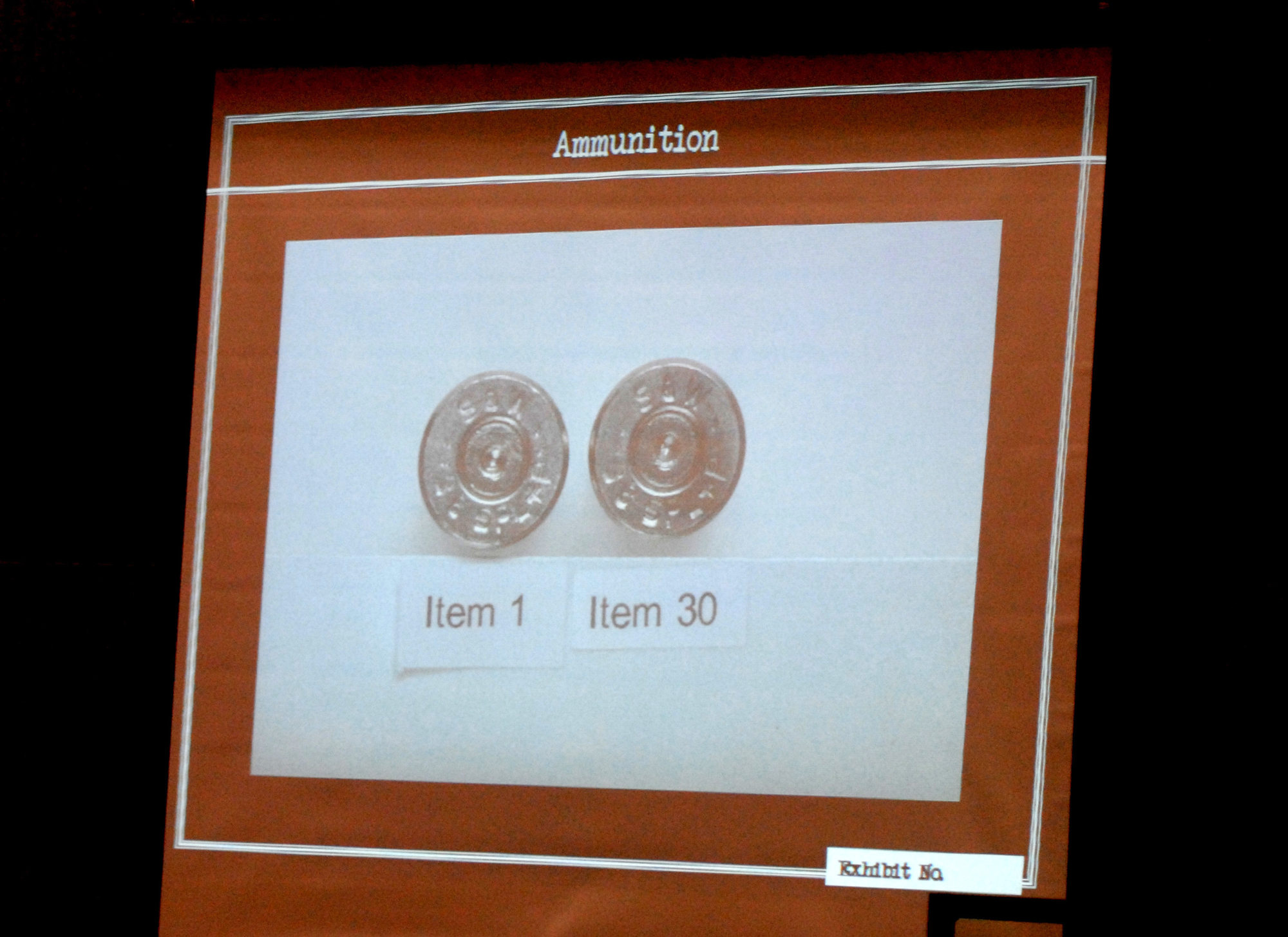

The debate comes at a time when many high-profile shootings involve ballistic comparisons that are taken as definitive evidence, pointing, for instance, to the weapon that killed Charlie Kirk, the right-wing political activist, or the partially 3D-printed firearm used to kill Brian Thompson, the CEO of UnitedHealthcare. Statisticians have pointed out other errors in ballistics research, too, and critics say the criteria used for comparing the markings are subjective.

To others not involved in the study, the 2025 analysis led by Scurich singled out one aspect of the scientific method: blinding, a widespread safeguard against bias. In doing so, Jeff Kukucka, a legal psychologist at Towson University, said the analysis scratched the surface of deeper problems in solving crimes where the ground truth remained unknown. “It’s very difficult in the real world, if not impossible, to measure accuracy because we don’t know what the true state of affairs is.”

To Kukucka, the 2025 study’s findings pointed to the importance of experimental design. “To me, this should be entirely uncontroversial because, again, this isn’t a new phenomenon, right? This is old news,” he said. “But, at the same time, there’s been so much controversy over measuring firearms’ error rates, that this is just more fuel for that fire.”

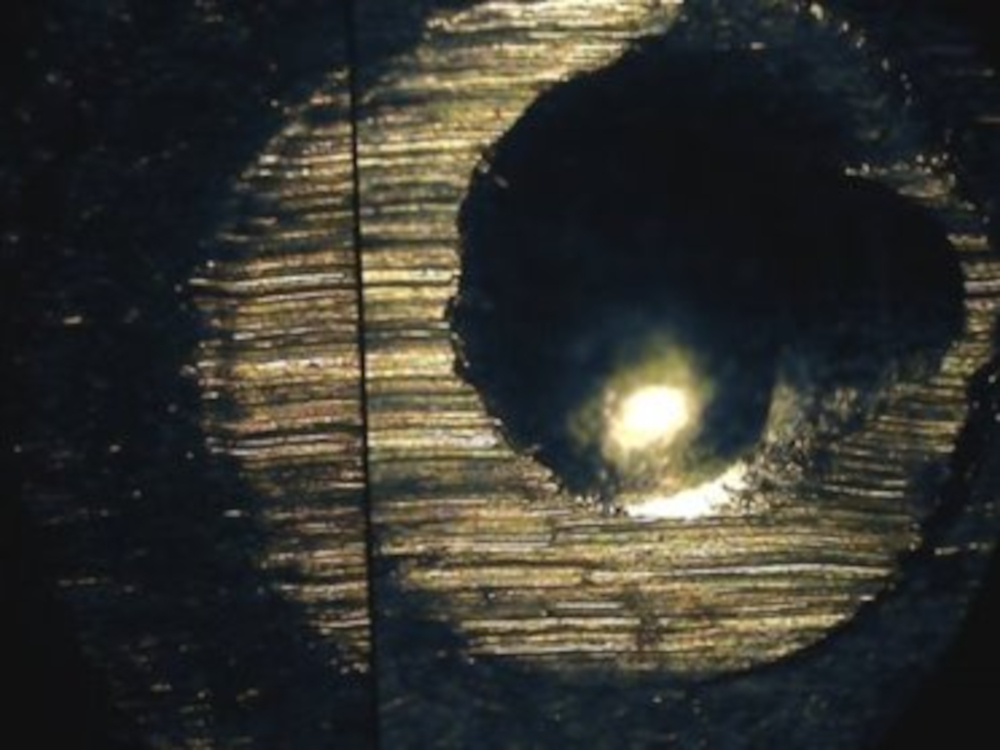

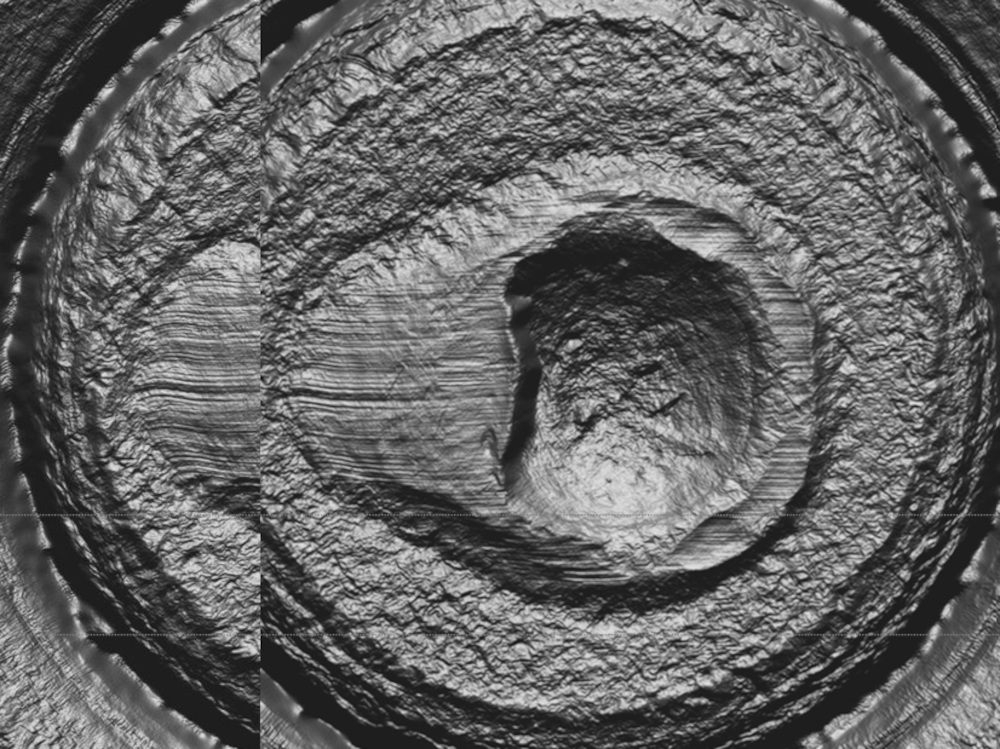

The concept of using microscopic contours to link ballistic evidence, such as bullets, to a specific firearm has been around for about a century. When someone pulls the trigger, the firing pin springs forward, leaving an impression; similarly, as the bullet spirals down the barrel of a gun, its force creates markings on the cartridge case, as does the ejection of a spent cartridge.

When today’s forensic examiners attempt to identify which firearm fired a particular cartridge or bullet, they typically use a comparison microscope. And they generally come to one of three conclusions: an identification — meaning they’ve found a match; an elimination — a nonmatch; or “inconclusive.”

“According to the firearm examiners, inconclusive is never a wrong response, because you’re not saying it’s a match or not a match,” said Scurich. “You’re just saying, ‘I can’t tell.’”

Earlier work suggested examiners made inconclusive calls more often in formal studies. A 2022 survey conducted by Scurich and colleagues suggested that, during routine casework, firearm examiners deemed about 20 percent of cases inconclusive. Based on prior studies, Scurich and his colleagues noted in their 2025 paper that when the examiners knew they were part of a study, the inconclusive rates topped 50 percent, suggesting that they were less likely to reach a conclusion. If nothing else, the disparity suggested something obvious: Participants in a study behave differently than they normally would, demonstrating a phenomenon known as the Hawthorne effect — the bias introduced by someone knowing they are being studied. But the 2025 study sought to put a hard figure on the phenomena using the blinded data from Houston.

The examiners in Houston knew they could be getting a test, but sometimes the blinding failed. For both bullets and cartridge casings tests, three out of 11 examiners figured out which were the mock samples, according to the new analysis, in effect unblinding what the lab had designed as a blind sample. (The test had been administered as a quality control measure. The Houston lab did not actually design the blinding to fail, as might be done in an experiment deliberately designed to test the hypothesis of whether or not examiners could detect blinded samples. In one instance, according to Stout, an examiner literally sniffed out the test, saying the firearm smelled distinct, too old to be a new gun in a contemporaneous sample, perhaps suggesting the difficulty of creating blinds that resemble actual casework.) In one paper describing the procedure, the lab’s head had said if they identified a test item (i.e., the blinding failed and the “test” case did not resemble real casework), they would be rewarded with a gift card. If they erroneously suspected a real case was a test, they had to pay the lab director a dollar, which, he says, some did in pennies.

In one instance, according to Stout, an examiner literally sniffed out the test, saying the firearm smelled distinct, too old to be a new gun in a contemporaneous sample.

But what Scurich and his co-authors’ 2025 paper claimed to show that when examiners realized they were reviewing a test case, they were 43.5 percent more likely to say “inconclusive.” It provided concrete evidence of what many suspected — that the reported error rates in black box studies were not reliable, and that participants who knowingly engaged in a proficiency test tended to reach fewer conclusions — only, Scurich said, the analysis gave some statistical ammunition to the argument. “This Hawthorne effect is ubiquitous,” he said. “It’s just a part of, I don’t know, the laws of human behavior. When people know they’re being observed or being studied, they behave differently. And I don’t know of any theoretical reason why firearms examiners would be exempt from this.”

While the data showed how behavior apparently changed under scrutiny, it left open the question about work that was routinely being done in crime labs all across the country. If the error rates in tests were skewed because of inconclusives, how accurate were firearms examiners in practice?

The field of forensics has long been criticized for being subjective. In 2009, a landmark National Academy of Sciences report questioned whether firearms examiners could definitively do what they claim — and, in particular, how often they made mistakes.

Such criticism drew attention to a problem that had been hiding in plain sight and stimulated additional studies that, in some researchers’ eyes, were sorely needed. Itiel Dror is a cognitive neuroscientist who studies cognitive bias and who has previously worked with Scurich. Rather than merely pointing out the effects of a blinding, in a letter to the editor published in the Journal of Forensic Sciences, Dror wrote that the Houston data did something more substantial: “it refutes the validity of the entire enterprise of the black-box studies as they have been conducted to date.”

Sarah Chu, a forensic science policy expert at the Perlmutter Center for Legal Justice at Cardozo Law, agreed that the results would have a larger impact, affecting research and also real-world cases in the criminal-legal system. In an email to Undark, she wrote: “This study is likely to be influential — and uncomfortable — because it challenges how forensic reliability is commonly evaluated by courts.”

The 2025 analysis was part of a broader research trend that focused on forensics’ methodological issues. Maria Cuellar, a statistician who works in the criminology department at the University of Pennsylvania, was among a team of researchers who examined 28 validation studies, some of which attempted to establish firearms examiner error rates. But the group found “methodological flaws that are so grave that they render the studies invalid.” (Or as she put it in an interview, “they’re just all designed very poorly from the perspective of study design and statistical methodology.”)

One fundamental shortcoming involved considering inconclusive findings as correct answers. “The problem is, if you say ‘inconclusive,’ it’s hard to know how to treat that,” Cuellar said. “Did you get the answer right or wrong? And in many of these studies, they say, ‘No, they got it right.’ Which seems crazy because, if you were a student taking my test and you answered ‘inconclusive’ to my questions, I’d be like, ‘That’s not the right answer. You obviously don’t know the answer to this question.’”

Hypothetically speaking, a given study might report a false positive rate of 1 percent, but if one were to recalculate and treat all the inconclusives as incorrect, the error rate could instead amount to a coin toss — or a 50 percent chance of having a particular outcome. But Cuellar said she does not know if the error rate is 50 percent. It remains unknown — because of several factors, which include basic questions around the experimental design, a study’s sample size, and its representativeness. “If you take each one of those separately, that’s a big problem,” she said. “But altogether, they might be even worse.” The error rates reported in black-box studies might be a few points off from reality, or, she said, they could give a completely misleading impression.

“This study is likely to be influential — and uncomfortable — because it challenges how forensic reliability is commonly evaluated by courts.”

Moreover, Cuellar argued in a 2025 paper, classifying non-answers as inconclusives, rather than an elimination, could arbitrarily lower the false negative rate — that is, when an examiner erroneously rules out a correct answer. In that paper, Cuellar noted that both false positives and false negatives were reported in fewer than half of the studies she examined. Some scholars suspect false negatives may actually be more common than false positives, but there is an institutional bias. A bedrock principle of the American criminal legal system is supposed to be the presumption of innocence, which means “the focus has very much mostly been on false positives,” as scholars like Brandon Garrett, a professor at Duke University School of Law who has also worked on papers with Scurich, have argued. But false negatives potentially mean missed connections and falsely excluding evidence that could have proved someone guilty.

Alicia Carriquiry, a statistician at Iowa State University, said that Cuellar’s work demonstrates that how researchers treat inconclusives has a tremendous impact on calculating rates of both false positives and false negatives. So much so, she said in an interview, “we don’t know error rates.” But Carriquiry emphasized that firearms examination is not a junk science. “When you use appropriate methods, like high resolution microscopy and the appropriate statistical methods and so on, you actually do get good results.” She added: “The subjective approach to evaluation is the problem, not the absence of marks.”

Statisticians who are critical of the techniques say that researchers need to establish error rates; the existing studies arguably have not done that. Cuellar acknowledges that the overall point is a subtle one: “It’s not saying, ‘This is bad.’ It’s saying, ‘We don’t know how bad this is.’ It could be very bad. But also, it just gives us a false sense of security and it’s misleading and we just need to know — we need to do better, better studies.”

Stout, the head of the Houston lab, said he remained somewhat circumspect about the implications of the 2025 analysis led by Scurich. The data were unique, and there seemed to be something different in the inconclusives when people knew they were being tested. But Stout acknowledged its limitations: The test had been set up for quality control, and it had a small pool of participants, so the results might not generalize to what he estimates is 900 examiners nationwide. Plus, he said, it was difficult to blind the mock samples. “My quality division has been playing with how to create bullets that actually look like bullets that would come from a case that went through a person and hit a wall and aren’t just pretty bullets that you would pull out of the shooting tank.”

In recent years, some courts have taken note of the ongoing dispute, ruling that jurors should not hear testimony from firearms examiners. But other judges continue to defer to precedent, allowing examiners to testify. At the same time, federal funders canceled grants specifically for these types of analyses in 2024, Carriquiry said. Other funding for forensic research has been diminished or dried up.

Nonetheless, such evidence — and the reported error rates about such evidence — is often believable to jurors and thus has real-world implications. As Scurich argues, it can mean the difference between life and death. “Gun violence is a problem. It’s very common. And so it would be very nice to have investigative techniques that could help solve these crimes,” he said. “But I think going into court and saying ‘It’s a match and our studies show that we almost never make errors’ is not very defensible.”